Sanjay Bhakta

It’s common for enterprises to invest heavily in AI governance during model development, then struggle to connect that work to day-to-day operations once models enter production. Governance often focuses on approval gates and documentation, while deployed systems continue running with limited visibility into how they behave under real operating conditions.

That disconnect shows up during inference. Inference is when models run live, act on real data, and consume computing resources continuously. It is where cost, performance, and capacity constraints surface and where earlier governance decisions either hold up or fall apart.

AI governance proves its value at inference time, when models run live, consume computational resources, and reveal whether governance decisions control cost, capacity, and the ability to scale AI systems without constantly adding infrastructure.

Why inference becomes the economic center of AI

Inference carries so much weight because it is where AI workloads spend nearly all of their operating lives. Once a model is deployed, every output it produces happens during inference, drawing on live data and deployed infrastructure.

That activity determines how intensively infrastructure is used, how reliably models perform, and how expensive operations become. Inference directly affects utilization, latency, and throughput. It also sets practical limits on how many models can run at once and how many use cases a single deployment can support.

As a result, inference increasingly shapes platform design and infrastructure investment. Efficient inference stretches existing resources further, delays expansion, and increases the return on investments already made.

Why governance must operate as an engineering discipline

If inference is where cost and scale emerge, governance determines whether those outcomes remain manageable. Governance shapes how AI deployments operate long after development ends by influencing model selection, data scope, performance thresholds, deployment architecture, and monitoring strategy.

Those decisions determine whether a model behaves predictably once inferencing begins. When governance stops at training and validation, inference remains unmanaged. Models may pass offline testing yet consume more resources than expected or degrade under sustained load as operating conditions change.

Effective governance embeds expectations about inference directly into deployment design. It establishes performance boundaries and capacity assumptions before models encounter real-world data, rather than reacting after limits have already been exceeded.
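
One way to make those boundaries concrete is to write them down as a machine-checkable budget. The sketch below is illustrative only: the `InferenceBudget` fields, the threshold values, and the `find_violations` helper are assumptions made for this example, not part of any specific platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InferenceBudget:
    """Hypothetical per-deployment limits agreed before go-live."""
    max_p95_latency_ms: float   # slowest acceptable 95th-percentile latency
    max_gpu_utilization: float  # fraction of capacity, 0.0 to 1.0
    min_throughput_rps: float   # requests (or frames) per second


def find_violations(budget, p95_latency_ms, gpu_utilization, throughput_rps):
    """Compare observed metrics with the budget and list every breach."""
    violations = []
    if p95_latency_ms > budget.max_p95_latency_ms:
        violations.append("p95 latency above budget")
    if gpu_utilization > budget.max_gpu_utilization:
        violations.append("GPU utilization above budget")
    if throughput_rps < budget.min_throughput_rps:
        violations.append("throughput below budget")
    return violations


# Example values are invented for illustration.
budget = InferenceBudget(max_p95_latency_ms=200.0,
                         max_gpu_utilization=0.8,
                         min_throughput_rps=30.0)
print(find_violations(budget, p95_latency_ms=250.0,
                      gpu_utilization=0.9, throughput_rps=40.0))
```

A check like this could run against production metrics on a schedule, so a breach is flagged while there is still room to adjust.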

What changes when models leave the lab

The transition from development to live inference changes how AI behaves in practice. After deployment, models no longer learn. They process live inputs and produce outputs based on prior training.

Consider a vision model deployed to detect unattended objects in a hotel lobby, identify perimeter breaches in restricted areas, or analyze crowd density across shared spaces.

Each use case requires careful preparation before deployment. Once live, the model encounters conditions no training dataset can fully anticipate. Lighting varies, camera angles shift, and human behavior evolves. The deployment must continue operating without retraining each time the environment changes.

Inference is where those assumptions meet reality.

Inference is costly to change. Retraining consumes time and resources. Redeployment introduces operational risk. Additional hardware increases ongoing expense. Because of that, inference becomes the proving ground for governance decisions made earlier in the lifecycle. Capacity assumptions, efficiency expectations, and performance tolerances surface once deployments run continuously under real conditions.

Strong governance makes these dynamics visible early enough to adjust. Weak governance forces reactive fixes once deployments approach failure.

What happens when inference governance falls short

The consequences of weak inference governance rarely appear as obvious technical failures. They surface as missed assumptions about capacity, concurrency, and how additional use cases stress existing infrastructure. The following example illustrates how those blind spots emerge in practice.

A regional transit authority deploys an agentic AI capability to monitor unattended objects across a network of stations. During testing, the model performs well. Validation data reflects typical lighting and pedestrian flow. Deployment follows the original scope closely.

Six months later, the authority expands coverage to additional stations and adds a second use case: monitoring crowd density during peak travel hours. The infrastructure remains unchanged. No one revisits the inference assumptions because the models themselves have not changed.

Within weeks, latency increases across the deployment. Alerts arrive too late to be actionable. Computational resource usage spikes during rush hours, forcing operators to throttle certain feeds. Engineers initially suspect model drift, but retraining does not resolve the issue.

The problem sits at the inference layer. Governance never accounted for how multiple models would compete for computational resources under live conditions or how peak concurrency would affect throughput. Capacity planning assumed isolated workloads rather than overlapping inference demands. By the time the problem becomes visible, the only immediate fix is to add hardware.
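
The gap between isolated and overlapping planning can be shown with simple arithmetic. The following sketch uses made-up hourly load figures (as a percentage of total capacity) for the two transit use cases. The numbers, the rush-hour window, and the function names are all hypothetical.

```python
RUSH_HOURS = {7, 8, 17, 18}  # assumed peak travel hours

# Hypothetical demand per hour of day, as a percentage of total capacity.
profiles = {
    "unattended_objects": [60] * 24,
    "crowd_density": [50 if h in RUSH_HOURS else 10 for h in range(24)],
}


def isolated_peaks(profiles):
    """Peak load of each workload when it is considered on its own."""
    return {name: max(load) for name, load in profiles.items()}


def peak_combined_load(profiles):
    """Return (hour, load) for the hour with the highest summed demand."""
    totals = [sum(load[h] for load in profiles.values()) for h in range(24)]
    peak_hour = max(range(24), key=lambda h: totals[h])
    return peak_hour, totals[peak_hour]


print(isolated_peaks(profiles))      # {'unattended_objects': 60, 'crowd_density': 50}
print(peak_combined_load(profiles))  # (7, 110)
```

Each workload fits comfortably when checked alone, yet the combined demand exceeds 100 percent of capacity during rush hour, which is the pattern the transit example describes.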

Inference exposes the gap between how the deployment was designed and how it actually operates.

Why inference optimization determines flexibility

This failure highlights a broader constraint: infrastructure decisions lock in assumptions about inference behavior. Most AI deployments size infrastructure around an initial set of use cases, shaping architecture and resource allocation accordingly.

Inference optimization does not always require new infrastructure. In many cases, it depends on how efficiently models use the computational resources already in place. Techniques such as lower-precision inference formats illustrate this point. For example, NVIDIA’s NVFP4 format reduces the computational and memory overhead of inference workloads while preserving model accuracy for many use cases. Governance determines when inference optimizations apply, how their tradeoffs affect performance, and what they change about capacity planning across deployments.

NVFP4 matters because it reflects a broader shift in how inference performance is engineered: lower-precision formats can keep accuracy close to higher-precision baselines while significantly reducing computational requirements. Introduced with NVIDIA’s Blackwell architecture, NVFP4 is a 4-bit floating-point format designed for efficient AI computation. It pairs very low precision with scaling techniques that preserve accuracy across a wide range of tensor values, so models can run inference using far fewer bits per operation while remaining reliable for many real-world workloads. For enterprises running large inference workloads, this kind of numerical innovation can significantly reduce memory bandwidth demands and improve throughput on the same hardware.
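
To give a feel for how low precision and scaling work together, here is a simplified pure-Python sketch of block-scaled 4-bit quantization. It is not NVIDIA's implementation. The block size of 16 and the grid of eight representable magnitudes are assumptions based on public descriptions of the format, and the per-block scale is kept as an ordinary Python float rather than a compact 8-bit value.

```python
# Magnitudes representable by a 4-bit float with 2 exponent bits and
# 1 mantissa bit (assumed grid for this illustration).
FP4_MAGNITUDES = (0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0)
BLOCK_SIZE = 16  # assumed number of values sharing one scale factor


def quantize_block(block):
    """Return (scale, codes); each code is a signed value from the FP4 grid."""
    max_abs = max(abs(x) for x in block)
    # Map the largest magnitude in the block onto the largest grid value.
    scale = max_abs / 6.0 if max_abs > 0 else 1.0
    codes = []
    for x in block:
        target = abs(x) / scale
        nearest = min(FP4_MAGNITUDES, key=lambda m: abs(m - target))
        codes.append(nearest if x >= 0 else -nearest)
    return scale, codes


def dequantize_block(scale, codes):
    """Reconstruct approximate values from a scale and its grid codes."""
    return [c * scale for c in codes]


def quantize(values):
    """Split a flat list into blocks and quantize each block independently."""
    return [quantize_block(values[i:i + BLOCK_SIZE])
            for i in range(0, len(values), BLOCK_SIZE)]


scale, codes = quantize_block([0.02, -0.11, 0.30, 0.07])
print(codes)
print(dequantize_block(scale, codes))
```

Because every block carries its own scale, a block of small values and a block of large values can both use the full 4-bit grid. That is the intuition behind keeping accuracy acceptable at such low precision. Whether the rounding error is tolerable for a given model is an empirical question that governance should answer with validation data.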

This development also illustrates why inference has become a central focus for the AI industry. NVIDIA has positioned inference performance and efficiency as a major theme for its GTC 2026 conference, reflecting how the economics of AI now depend on how efficiently models run after deployment. Technologies such as NVFP4 help explain why inference optimization now sits alongside model architecture and data quality as a critical factor in scaling AI deployments. For organizations investing heavily in AI infrastructure, understanding when and how these advances apply has become an essential part of governance.

Inference efficiency determines whether existing infrastructure can absorb new use cases and wider coverage. When governance provides visibility into how models consume computational resources and how close deployments operate to capacity, decision-makers can extend coverage without expanding infrastructure. Inference optimization turns infrastructure into something that adapts rather than something that breaks.

How inference connects governance to planning and cost control

Inference performance evolves as workloads change and environments drift. Governance that extends into inference makes those shifts visible in time to respond deliberately rather than reactively.

By linking model behavior to infrastructure consumption and operating cost, inference-level governance supports informed planning. Operators can anticipate capacity limits, understand which workloads drive cost, and identify where optimization creates room for growth.
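
As a minimal sketch of that linkage, the snippet below attributes a shared infrastructure bill to individual workloads and reports the remaining headroom. The workload names, GPU-hour figures, hourly rate, and function names are hypothetical and chosen only to illustrate the arithmetic.

```python
def attribute_cost(gpu_hours_by_workload, hourly_rate):
    """Return each workload's cost and its share of total GPU-hours."""
    total_hours = sum(gpu_hours_by_workload.values())
    report = {}
    for name, hours in gpu_hours_by_workload.items():
        share = hours / total_hours if total_hours else 0.0
        report[name] = {"cost": hours * hourly_rate, "share": share}
    return report


def headroom_fraction(used_gpu_hours, capacity_gpu_hours):
    """Fraction of capacity still unused over the same period."""
    return (capacity_gpu_hours - used_gpu_hours) / capacity_gpu_hours


# Invented monthly figures for two workloads on shared hardware.
usage = {"unattended_objects": 300, "crowd_density": 100}
print(attribute_cost(usage, hourly_rate=2.0))
print(headroom_fraction(sum(usage.values()), capacity_gpu_hours=500))
```

A report along these lines shows which workload drives most of the spend and how much room is left before a new use case would require more hardware.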

At inference, governance determines whether performance limits, capacity thresholds, and expansion costs stay predictable or turn into surprises. That predictability enables planning, supports scale, and protects return on investment.

How Centific supports inference-driven governance

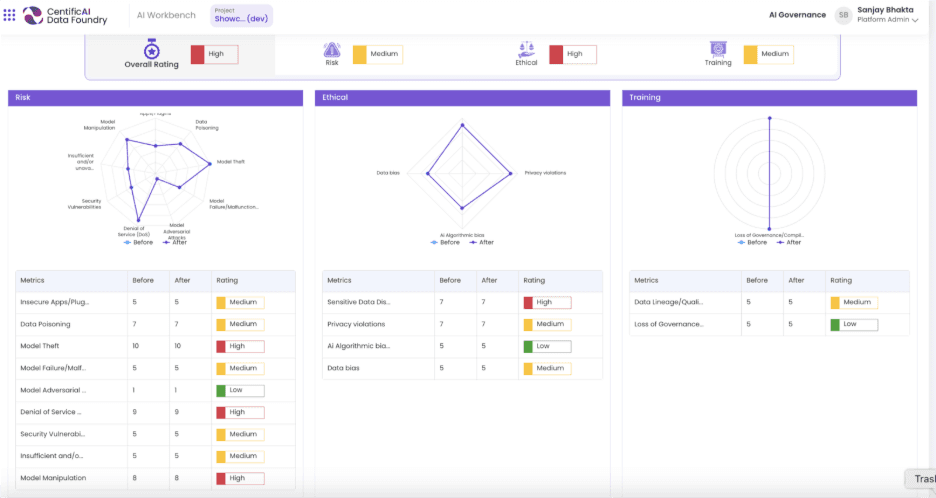

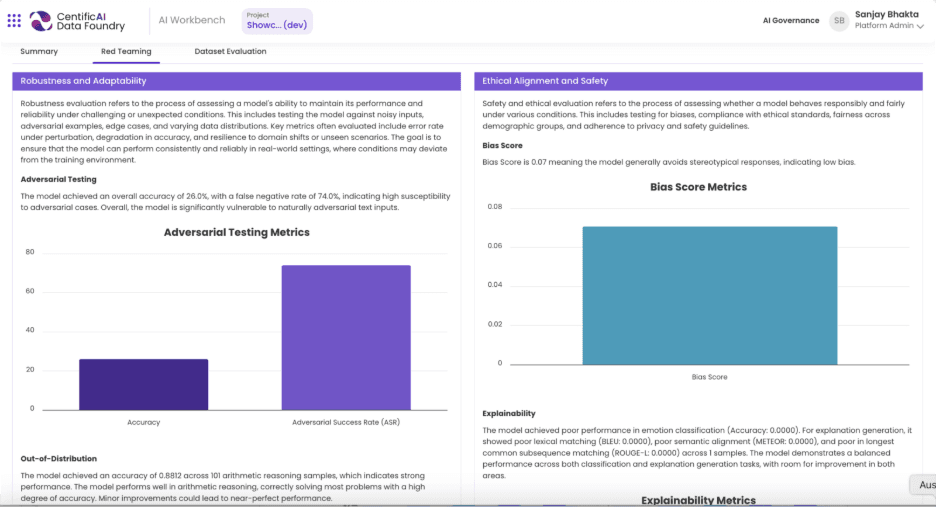

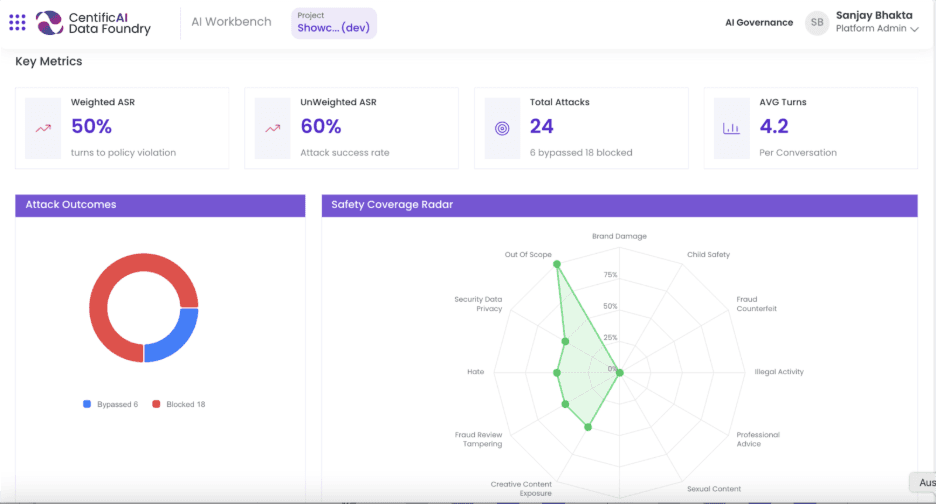

Centific treats governance as a continuous operating discipline that spans the full AI lifecycle, including inference. Through our AI Data Foundry, Centific supports model discovery, data curation, training, validation, deployment, and monitoring once models run live.

By observing inference behavior in production environments, the platform provides visibility into performance, drift, and computational resource utilization. That insight allows you to understand capacity limits and optimization opportunities before constraints force expensive changes.

When governance accounts for inference from the start, AI deployments scale with fewer surprises, infrastructure investments last longer, and new use cases fit within existing constraints.
