
Centific Physical AI Center of Excellence

The humanoid robotics race is accelerating, but much of the industry is optimizing for demonstration rather than deployment. Teleoperated humanoids make compelling marketing moments, yet they are not autonomous, resilient, or safe enough for real production environments.

Building a robot is no longer the hardest part. Training it to perform real work, safely and reliably, is where progress still stalls.

Across CES 2026 and similar showcases, dozens of humanoid robots have demonstrated impressive mobility. They have walked across stages, carried boxes, and completed carefully choreographed tasks. Those demonstrations are technically impressive, but they obscure a deeper truth: the gap between a humanoid that moves and a humanoid that is useful remains enormous.

The bottleneck is not hardware. It is not even algorithms. The problem is the absence of large-scale, high-quality training data for real-world physical interaction, particularly interaction with people.

Why this matters now

The urgency around humanoid robotics results from several technological trends converging at the same time:

Humanoid hardware is finally reaching practical maturity. Advances in actuators, sensors, and control systems mean robots can now move with a stability and dexterity that were difficult to achieve even a decade ago.

Simulation platforms are scaling robot training. Tools like NVIDIA Isaac Sim allow researchers to generate thousands of training scenarios, enabling robots to practice tasks in virtual environments before deployment.

Robot foundation models are emerging. Models such as NVIDIA GR00T and other vision-language-action architectures provide a starting point for learning general robot behaviors across different embodiments.

These developments make large-scale humanoid deployment technically plausible for the first time.

But without massive real-world training datasets, humanoid robots will remain impressive demonstrations rather than reliable systems capable of operating in real environments.

The uncomfortable truth about humanoid AI

Watch humanoid demos closely. The robots are walking, picking up objects, or manipulating rigid items in controlled environments through teleoperation. Occasionally, a deformable task appears, such as folding a towel, but only under ideal conditions.

Simple objects like apples and tennis balls see near-100% success rates; complex tools like screwdrivers and scissors drop to around 30%.

We do not need humanoid robots to compose music, generate art, cook our meals, or brew our morning coffee. AI systems that live entirely in software already do those things and will soon do more.

Humanoids exist to do what humans cannot or should not do

Humanoid robots are not meant for novelty tasks. Their real purpose lies in the work that breaks bodies, the environments too toxic, too hot, or too confined for a person to safely enter, and the precision jobs that demand hours of sustained force control without fatigue. Much of this work remains dangerous today, contributing to hundreds of thousands of injuries each year because there has been no viable alternative.

Think of the electrician re-entering a live panel after a near-miss. The pipefitter working in a confined space with inches of clearance and zero ventilation. The wildland firefighter cutting containment lines while the fire roars uphill. The nuclear plant technician handling material that will remain lethal for a thousand years.

These are the tasks humanoids must learn to perform.

Now consider tasks that matter in real deployments. Would you trust humanoid robots to assist with airport security screening, support a clinician during a medical procedure, or help an elderly person stand up safely after a fall?

Those scenarios require sustained physical contact with humans, precise force control, real-time adaptation, and judgment under uncertainty. Today’s humanoids are far from meeting those requirements.

The distance between a humanoid and a useful humanoid is measured in millions of training examples that do not yet exist.

The robotics training data problem nobody wants to talk about

Physical AI faces a constraint that language and vision AI have not. Large language models have been trained on trillions of words of humanity's written output, scraped, aggregated, and refined. Vision models have been trained on images captured and labeled over decades. Physical AI training data, by contrast, does not exist on the internet.

Humanoid robots require synchronized streams of vision, depth, force, torque, and tactile feedback, captured at millisecond precision while actions are performed in the physical world. They require accurate action trajectories, recovery behaviors, and exposure to failure. None of this can be scraped or cheaply generated.

Every meaningful demonstration must be collected deliberately, in real environments, one episode at a time. As Joy Yang argues in The Strange Review (March 2026), the hand has become the gating constraint for the entire humanoid industry. That constraint is primarily a training data problem, not a hardware problem.

This is why NVIDIA, Meta, Google, Stanford, and major robotics labs are building data collection infrastructures. As robotics shifts from programmed robots to learning-based systems, training data becomes the obstacle and the key competitive differentiator.

The scale of the problem

The imbalance between digital AI data and physical AI data is stark. Language models are trained on trillions of tokens. Vision systems learn from billions of images. Robot learning datasets remain microscopic by comparison.

| Dataset | Scale |
|---|---|
| DROID (2024) | 76K trajectories, 564 scenes |
| Open X-Embodiment / RT-X (2023) | ~1M trajectories, 22 robot embodiments |
| BEHAVIOR-1K (2024) | 1,000 household activities (primarily simulation) |
| RoboTurk (2019) | Crowdsourced demos, limited scaling |
| GPT-3 training text (for comparison) | 45 TB of raw text before filtering |

The world's robot learning datasets contain perhaps a few million demonstrations. Compare that to the 45 TB of raw web text that OpenAI filtered down to build the training corpus for GPT-3.

Put another way: we’re trying to teach robots to navigate the physical world with 0.0001% of the data we used to teach AI to write emails.

That is why progress can look impressive in demos and still feel fragile in anything resembling deployment.

The industrial skills gap: factory SOPs, electricians, and the trades datasets nobody is building

Industrial environments reveal another side of the training problem: vast amounts of operational knowledge exist, but almost none of it is captured in a format robots can learn from.

The humanoid robot conversation is dominated by warehouses and household tasks. But some of the highest-value deployment environments for humanoid robots are industrial settings where skilled trades operate: electrical panels, HVAC systems, manufacturing lines, and process facilities. These environments have something most robot training pipelines lack entirely: decades of written standard operating procedures (SOPs), safety protocols, and task breakdowns developed for human workers. The challenge is that this institutional knowledge has never been translated into the multimodal, action-labeled format that physical AI requires.

Factory SOPs as latent training signal

Manufacturing facilities have accumulated SOPs over decades. A tier-1 automotive supplier may have thousands of work instructions covering torque sequences, inspection checkpoints, tool changeovers, and lockout/tagout procedures. A semiconductor fab has process recipes specifying exact motion paths for wafer handling with sub-millimeter tolerances. These documents encode physical task knowledge at a level of detail that no internet-scraped dataset can replicate.

The gap is that SOPs describe what to do in text, not how to do it in motion. Bridging that gap requires pairing each SOP step with teleoperated demonstrations, synchronized sensor streams, and, critically, recovery demonstrations for when the step goes wrong. A torque sequence that assumes nominal bolt seating requires a very different training signal when a fastener cross-threads. Organizations sitting on large SOP libraries have a significant and largely untapped foundation for robot training data, but only if they invest in the collection infrastructure to activate it.
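To make that pairing concrete, here is a minimal sketch of how one SOP step might be linked to nominal and recovery demonstrations, with a check that flags steps whose anticipated failure modes have no recovery coverage yet. The classes and field names (`SopStep`, `Demonstration`, `failure_mode`) are hypothetical illustrations, not a schema from any particular toolkit.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Demonstration:
    """One recorded episode tied to an SOP step (hypothetical schema)."""
    episode_id: str
    outcome: str                        # "nominal" or "recovery"
    failure_mode: Optional[str] = None  # set only for recovery episodes


@dataclass
class SopStep:
    """A written SOP step plus the demonstrations that ground it in motion."""
    step_id: str
    text: str
    expected_failure_modes: List[str]
    demos: List[Demonstration] = field(default_factory=list)

    def missing_recoveries(self) -> List[str]:
        """Expected failure modes that have no recovery demonstration yet."""
        covered = {d.failure_mode for d in self.demos if d.outcome == "recovery"}
        return [m for m in self.expected_failure_modes if m not in covered]

    def is_trainable(self) -> bool:
        """Usable only with at least one nominal demo and full recovery coverage."""
        has_nominal = any(d.outcome == "nominal" for d in self.demos)
        return has_nominal and not self.missing_recoveries()


# Hypothetical example: a torque step with two anticipated failure modes.
step = SopStep(
    step_id="torque-seq-03",
    text="Torque flange bolts in a star pattern to spec.",
    expected_failure_modes=["cross_thread", "wrench_slip"],
)
step.demos.append(Demonstration("ep-001", "nominal"))
step.demos.append(Demonstration("ep-002", "recovery", "cross_thread"))
print(step.missing_recoveries())  # ['wrench_slip']
print(step.is_trainable())        # False
```

The design choice here is that a step only counts as trainable once its failure modes are covered, which mirrors the point that a cross-threaded fastener needs its own training signal rather than more nominal runs.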

Carpentry and construction trades

Carpentry presents a microcosm of everything that makes skilled-trade data collection hard: material variability, tool diversity, force-dependent outcomes, and judgment calls that experienced craftspeople make instinctively but rarely articulate. A carpenter sizing a mortise-and-tenon joint applies different chisel pressure depending on wood grain orientation. This judgment takes years of tactile experience to internalize. No text SOP has ever captured that experience.

The electrician problem: dexterity, judgment, and situational variability

Electrical trades represent one of the most robotics-relevant skilled labor categories, and one of the most data-scarce. An electrician performing a panel termination must strip wire to a precise length, identify conductor gauge by feel and visual inspection, route cables through conduit without damaging insulation, and torque terminals to spec, all while reading a wiring diagram, navigating a live panel safely, and adapting to non-standard installations that differ from every blueprint. This is a task requiring tool manipulation, force control, visual reasoning, procedural memory, and in-context judgment. No public dataset covers it at scale.

The same pattern applies across the skilled trades. An HVAC technician diagnosing a refrigerant leak must reason from sensor readings, visual inspection, and past failure patterns simultaneously. A pipefitter positioning a joint must account for thermal expansion, alignment tolerances, and access constraints in the same motion sequence. These tasks share a common structure: they are procedurally defined but situationally variable. The SOP tells you the steps. The craftsperson tells you how to execute them under real conditions. Capturing the gap between the written procedure and the physical execution is exactly what robot training data must do, and it requires collecting demonstrations from skilled workers in the field, not just in labs.

What an industrial robotics dataset requires

Building an industrial robotics dataset differs from typical robot learning data collection in several dimensions that are rarely discussed. Tool interactions are a primary challenge: unlike pick-and-place with unpowered objects, industrial tasks involve powered tools, calibrated instruments, and specialized end-effectors.

Each introduces its own force-feedback signature and failure mode. A torque wrench that slips off a fastener head, a wire stripper that nicks insulation, a multimeter probe that contacts the wrong terminal: these are the most consequential recovery scenarios, and they are exactly what a lab demonstration cannot reproduce.

Worker expertise is the second dimension, because it becomes a data quality variable. A journeyman electrician and an apprentice will execute the same panel termination with measurably different motion profiles, force application, and error correction strategies. Capturing expertise gradients, not just successful completions, produces richer training signals.

Site variability is the third dimension. Industrial environments are not static. Conduit runs differ between buildings. Panel configurations deviate from drawings. Ambient temperature affects material compliance.

A useful industrial dataset must span real sites, not just representative mockups. NVIDIA’s Omniverse NuRec addresses part of this by enabling rapid photorealistic reconstruction of real facilities from photo captures. But visual fidelity alone does not capture the contact physics variation that industrial task recovery depends on.
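The three dimensions above (tools, worker expertise, and site) suggest a simple coverage audit: count episodes for every combination and list the combinations that fall short. This is a minimal sketch assuming each episode record carries `tool`, `expertise`, and `site` labels; those field names and the minimum count are hypothetical.

```python
from collections import Counter
from itertools import product
from typing import Dict, Iterable, List, Tuple

Cell = Tuple[str, str, str]  # (tool, expertise, site)


def coverage_gaps(
    episodes: Iterable[Dict[str, str]],
    tools: List[str],
    expertise_levels: List[str],
    sites: List[str],
    min_episodes: int,
) -> List[Cell]:
    """Return every (tool, expertise, site) combination with too few episodes."""
    counts = Counter((e["tool"], e["expertise"], e["site"]) for e in episodes)
    # Counter returns 0 for combinations never seen, so empty cells are caught too.
    return [
        cell
        for cell in product(tools, expertise_levels, sites)
        if counts[cell] < min_episodes
    ]


episodes = [
    {"tool": "torque_wrench", "expertise": "journeyman", "site": "plant_a"},
    {"tool": "torque_wrench", "expertise": "journeyman", "site": "plant_a"},
    {"tool": "torque_wrench", "expertise": "apprentice", "site": "plant_a"},
]
gaps = coverage_gaps(
    episodes,
    tools=["torque_wrench"],
    expertise_levels=["journeyman", "apprentice"],
    sites=["plant_a", "plant_b"],
    min_episodes=2,
)
for gap in gaps:
    print(gap)
```

Here only the journeyman-at-plant_a cell meets the minimum; the other three combinations are reported, including the two at a site that has no data at all.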

The skilled trades are facing a generational workforce gap. In the United States alone, the electrical industry is projected to need hundreds of thousands of additional workers over the next decade as experienced tradespeople retire. Robotics is part of the long-term answer. But robots cannot perform work they were never trained on. Bridging the data gap for industrial tasks requires partnerships between robotics labs, trades training programs, and the companies that employ skilled workers. And it requires building that data infrastructure now, before deployment timelines make the gap impossible to close.

What “difficult” means

The difference between demonstration and deployment becomes clearer when we look at tasks that must work in real-world conditions.

Security screening: the ultimate stress test

Consider the challenge of training a humanoid to assist with TSA-style security screening. The goal is not to replace human officers but to augment them, with the robot handling the repetitive physical aspects while humans focus on judgment calls.

This is an active area of development. And it’s extraordinarily difficult.

A screening action requires:

Contact force modulation: 2 to 5 N of pressure, enough to detect concealed objects, not enough to cause discomfort

14 body zones covered systematically while respecting prohibited areas

Multi-modal perception: visual (passenger pose, clothing folds), tactile (12,000+ sensor readings/second), force/torque (six-axis feedback)

Real-time adaptation: passengers breathe, shift, tense up. The robot must maintain appropriate contact through continuous movement

Edge case detection: distinguish between a metal chain, a prosthetic limb, a concealed weapon, and an insulin pump

Force tolerance: ±0.5 N. One wrong calibration and you've either missed a threat or caused a complaint.

Where do you get 50,000 demonstrations of this task with synchronized multimodal sensor data?
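The force figures in the screening list translate naturally into a runtime check. This sketch assumes the 2 to 5 N target band, treats the ±0.5 N tolerance as a margin that widens that band, and tracks coverage of 14 numbered zones with a set of prohibited ones. The zone numbering and the way tolerance is applied are illustrative assumptions, not a certified screening protocol.

```python
from dataclasses import dataclass, field
from typing import Set

TARGET_MIN_N = 2.0  # lower edge of the 2 to 5 N contact band
TARGET_MAX_N = 5.0  # upper edge of the contact band
TOLERANCE_N = 0.5   # assumption: widens the band symmetrically
NUM_ZONES = 14      # zones indexed 0..13 (hypothetical numbering)


@dataclass
class ScreeningMonitor:
    prohibited: Set[int] = field(default_factory=set)
    covered: Set[int] = field(default_factory=set)

    def classify(self, force_n: float) -> str:
        """Label one force reading relative to the band plus tolerance."""
        if force_n > TARGET_MAX_N + TOLERANCE_N:
            return "abort"         # too much pressure: break contact
        if force_n < TARGET_MIN_N - TOLERANCE_N:
            return "insufficient"  # too light to detect concealed objects
        return "ok"

    def record(self, zone: int, force_n: float) -> str:
        """Mark a zone covered only if contact stayed inside the tolerated band."""
        if not 0 <= zone < NUM_ZONES:
            raise ValueError(f"unknown zone {zone}")
        if zone in self.prohibited:
            raise PermissionError(f"zone {zone} must not be contacted")
        status = self.classify(force_n)
        if status == "ok":
            self.covered.add(zone)
        return status

    def remaining(self) -> Set[int]:
        """Zones still to screen, excluding prohibited ones."""
        return set(range(NUM_ZONES)) - self.covered - self.prohibited


monitor = ScreeningMonitor(prohibited={13})
print(monitor.record(0, 3.2))      # ok
print(monitor.record(1, 6.1))      # abort
print(monitor.record(1, 1.2))      # insufficient
print(len(monitor.remaining()))    # 12
```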

Medical assistance: force-sensitive human contact

The Surgie project at UC San Diego (Atar et al., 2025) has demonstrated humanoid teleoperation for medical procedures like ultrasound guidance, emergency airway management, and physical examinations. Their findings reveal both promise and sobering reality.

The Unitree G1 humanoid, controlled via bimanual teleoperation, successfully positioned ultrasound probes with appropriate pressure, assisted with bag-valve-mask (BVM) ventilation, and performed basic needle insertion tasks.

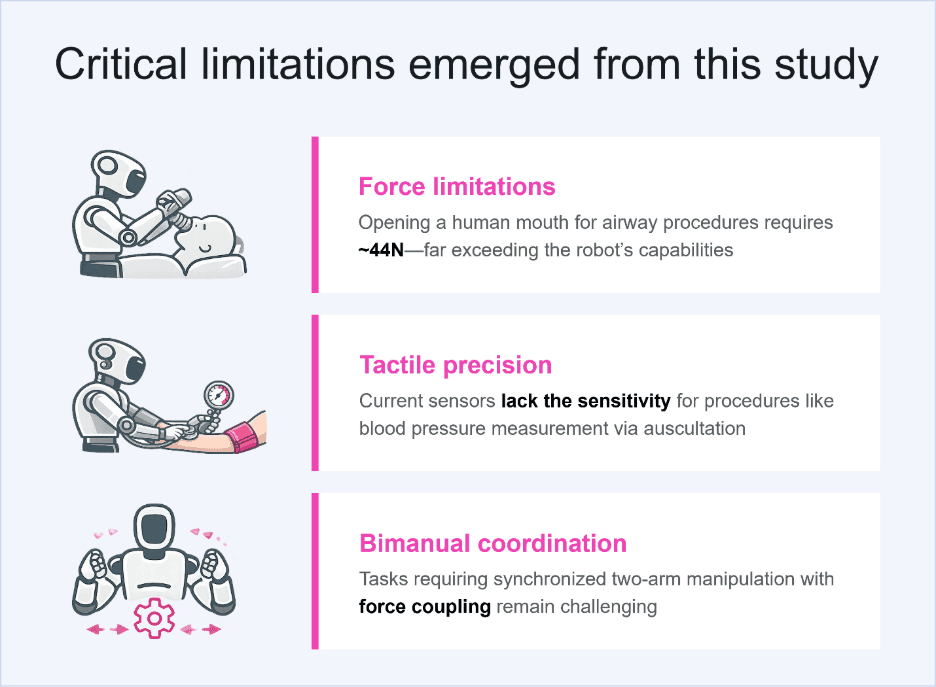

Critical limitations emerged from this study:

Force limitations: Opening a human mouth for airway procedures requires approximately 44 N, far exceeding the robot’s capabilities

Tactile precision: Current sensors lack the sensitivity for procedures like blood pressure measurement via auscultation

Bimanual coordination: Tasks requiring synchronized two-arm manipulation with force coupling remain challenging

As Professor Michael Yip argues in Science Robotics (July 2025): “The scale of data required to train a truly capable AI to perform surgery with today’s robots would be too labor-intensive and cost-prohibitive with existing platforms.”

If humanoid robots in industrial settings can build strong AI foundation models through massive data collection, those capabilities could transfer to medical settings. A robot that learns to fold towels with two hands is learning bimanual coordination, the same fundamental skill needed to hold an ultrasound probe while inserting a needle.

Household manipulation: the deceptively simple

Folding a towel seems simple. It’s not. Consider:

Deformable object manipulation: fabric doesn't have a fixed shape. Every fold changes the state space

Bimanual coordination: two arms must work in concert with precise timing

Visual occlusion: one hand often blocks the camera’s view of what the other hand is doing

Recovery from failure: a bunched towel requires unfolding and restarting, not just “trying again.”

The BEHAVIOR-1K benchmark includes 1,000 household activities, from “clean a bathroom” to “pack a suitcase.” These aren’t single actions; they’re long-horizon tasks requiring dozens of coordinated sub-actions, each of which needs its own training data.
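One way to see why long-horizon activities multiply the data requirement is to write a task as a tree of sub-actions and count the primitive leaves, each of which needs its own demonstrations. The decomposition of "pack a suitcase" below is a hypothetical illustration, not taken from the BEHAVIOR-1K task definitions, and the per-primitive demonstration count is an arbitrary placeholder.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Task:
    name: str
    subtasks: List["Task"] = field(default_factory=list)

    def leaves(self) -> List[str]:
        """Return the primitive sub-actions (nodes with no children), depth-first."""
        if not self.subtasks:
            return [self.name]
        result: List[str] = []
        for sub in self.subtasks:
            result.extend(sub.leaves())
        return result


# Hypothetical decomposition, for illustration only.
pack_suitcase = Task("pack a suitcase", [
    Task("open suitcase", [Task("unzip"), Task("lift lid")]),
    Task("pack clothes", [Task("grasp shirt"), Task("fold shirt"), Task("place shirt")]),
    Task("close suitcase", [Task("lower lid"), Task("zip")]),
])

DEMOS_PER_PRIMITIVE = 50  # placeholder, not a measured requirement
primitives = pack_suitcase.leaves()
print(len(primitives))                        # 7
print(len(primitives) * DEMOS_PER_PRIMITIVE)  # 350
```

Even this shallow tree hides the harder part: each primitive also needs recovery data for when it fails partway, such as a shirt that bunches during the fold.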

Eldercare: stakes higher than warehouse picking

When a robot assists an elderly person in standing up from a chair, the force profile must be precisely calibrated:

Too little support, and the person falls

Too much force, and the result is injury, bruising, or fear

Wrong timing, and the person loses balance

Wrong contact points, and the robot causes discomfort or harm

And unlike a warehouse where a dropped package means a dented box, a fall can mean a broken hip, hospitalization, or worse.

The regulatory and liability landscape for human-contact robotics is still being written. TSA/FAA/IATA have no established framework for robotic security screening. FDA pathways for humanoid medical assistants are undefined. Technology is advancing faster than governance.

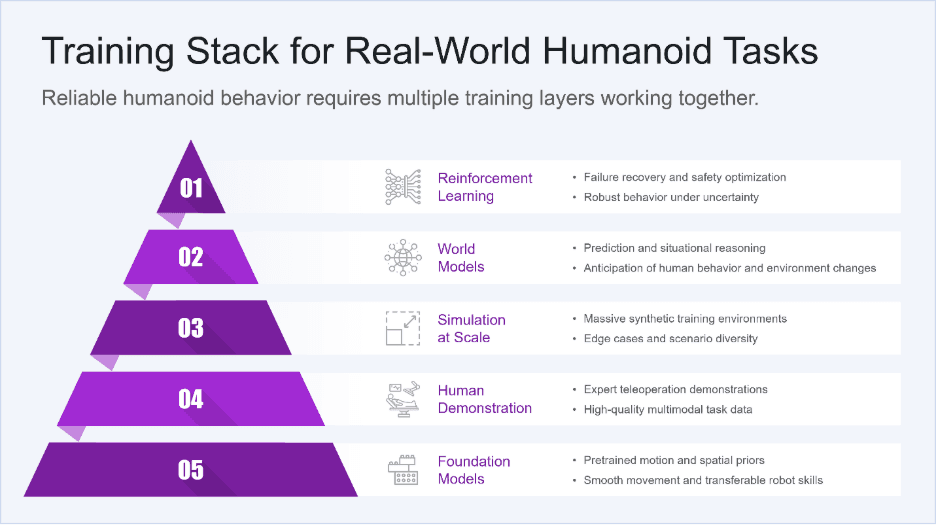

The training stack for real-world tasks

Across security, healthcare, household assistance, and eldercare, a consistent pattern emerges. Useful humanoid behavior requires more than motion. It requires perception, force control, anticipation, recovery, and governance working together as a system.

This is why isolated demos do not translate into deployable capability. The challenge is not performing a task once. It is performing it safely, repeatedly, across people, environments, and edge cases. Here’s what works for training humanoids on complex, contact-rich, judgment-intensive tasks:

Layer 1: foundation models (the starting point)

You don’t train from scratch. Foundation models like NVIDIA GR00T provide humanoid motion priors learned from 100+ different robot embodiments. This gives you:

Smooth, natural-looking movement

Basic spatial reasoning

Transfer learning from millions of existing demonstrations

But GR00T doesn’t know what “frisking” or “ultrasound guidance” means. That’s where task-specific training begins.

Layer 2: human demonstration (the bottleneck)

Teleoperation systems like FastUMI, Mobile ALOHA, and JoyLo allow human experts to demonstrate tasks while robots record every sensor stream.

The Surgie project uses HTC Vive trackers and webcam-based hand pose estimation to capture high-fidelity bimanual demonstrations. Custom grasping configurations let operators switch between holding a scalpel, a stethoscope, or an ultrasound probe.

Quality matters more than quantity. One well-annotated demonstration with synchronized multi-modal data is worth 100 noisy recordings. The Centific GAZE pipeline (Krishna et al., NeurIPS 2025) demonstrates how AI-assisted pre-annotation can achieve 28%-32% reduction in human review time while maintaining annotation quality.
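The general idea behind AI-assisted pre-annotation can be sketched as confidence-based routing: a model proposes labels, high-confidence proposals go to a quick human confirmation pass, and low-confidence ones go to full manual annotation. This is a generic illustration with an assumed threshold and hypothetical field names, not a description of how the GAZE pipeline is actually built.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class PreLabel:
    clip_id: str
    label: str
    confidence: float  # model confidence in [0, 1]


def route(prelabels: List[PreLabel], confirm_threshold: float = 0.9) -> Dict[str, List[str]]:
    """Split model proposals into a fast-confirm queue and a full-annotation queue.

    Every clip still reaches a human reviewer; only the depth of review changes.
    """
    queues: Dict[str, List[str]] = {"confirm": [], "annotate": []}
    for p in prelabels:
        if not 0.0 <= p.confidence <= 1.0:
            raise ValueError(f"confidence out of range for {p.clip_id}")
        key = "confirm" if p.confidence >= confirm_threshold else "annotate"
        queues[key].append(p.clip_id)
    return queues


batch = [
    PreLabel("clip-01", "grasp", 0.97),
    PreLabel("clip-02", "release", 0.62),
    PreLabel("clip-03", "regrasp_after_slip", 0.91),
]
print(route(batch))
# {'confirm': ['clip-01', 'clip-03'], 'annotate': ['clip-02']}
```

Any time saved comes from the confirm queue being faster to review than annotating from scratch; the threshold trades that saving against the risk of reviewers rubber-stamping wrong proposals.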

Layer 3: simulation at scale (the multiplier)

Real demonstrations are expensive. Simulation is cheap, but the “reality gap” can be fatal. In NVIDIA Isaac Sim, Centific Physical AI Center of Excellence (PACE) can simulate 100,000+ episodes in a short time frame:

Thousands of synthetic body types

Varied lighting, textures, and physics parameters

Injected edge cases (concealed objects, prosthetics, uncooperative subjects)

Adversarial scenarios that would be impossible to collect in reality

Domain randomization keeps policies from overfitting to simulation artifacts. Cosmos Transfer bridges the visual gap with photorealistic rendering.
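The randomization described above amounts to drawing each simulated episode's parameters from ranges instead of fixing them, and injecting edge cases at a controlled rate. The sketch below uses plain Python rather than the Isaac Sim API; every parameter name, range, and the edge-case rate is a hypothetical placeholder.

```python
import random
from dataclasses import dataclass
from typing import Optional

EDGE_CASES = ["concealed_object", "prosthetic", "uncooperative_subject"]


@dataclass
class EpisodeParams:
    body_height_m: float
    body_mass_kg: float
    light_intensity: float  # relative to a nominal scene light of 1.0
    floor_friction: float
    edge_case: Optional[str]


def sample_episode(rng: random.Random, edge_case_rate: float = 0.2) -> EpisodeParams:
    """Draw one randomized episode configuration (illustrative ranges only)."""
    edge_case = rng.choice(EDGE_CASES) if rng.random() < edge_case_rate else None
    return EpisodeParams(
        body_height_m=rng.uniform(1.45, 2.00),
        body_mass_kg=rng.uniform(45.0, 130.0),
        light_intensity=rng.uniform(0.3, 1.5),
        floor_friction=rng.uniform(0.4, 1.0),
        edge_case=edge_case,
    )


rng = random.Random(0)  # fixed seed so the same batch can be regenerated exactly
batch = [sample_episode(rng) for _ in range(1000)]
share = sum(p.edge_case is not None for p in batch) / len(batch)
print(f"edge-case share: {share:.2f}")
```

Seeding a dedicated `random.Random` instance keeps every generated batch reproducible, which matters when a failure in one episode needs to be replayed and debugged.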

But simulation-trained policies typically see 15%-30% performance drops when deployed to real robots. Closing this gap requires systematic simulation-to-reality validation: measuring where failures occur and iterating until transfer works. Neural reconstruction tools like NVIDIA Omniverse NuRec (using 3DGUT) can compress environment capture from days of manual modeling to an afternoon of photography, converting real spaces into photorealistic Isaac Sim scenes. Organizations training robots to perform pick and place in real facilities such as warehouses, clinics, and care homes can reconstruct the actual environment rather than approximating it.
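Systematic sim-to-real validation starts with measuring the gap task by task. A minimal sketch, assuming success rates have already been measured for the same tasks in simulation and on hardware, computes the relative drop and flags tasks whose drop exceeds a chosen threshold. The task names, rates, and the 20% threshold are illustrative values, not measurements.

```python
from typing import Dict, List


def relative_drop(sim_rate: float, real_rate: float) -> float:
    """Fraction of simulated success lost on the real robot."""
    if sim_rate <= 0:
        raise ValueError("simulated success rate must be positive")
    return (sim_rate - real_rate) / sim_rate


def tasks_needing_iteration(
    sim: Dict[str, float], real: Dict[str, float], max_drop: float = 0.20
) -> List[str]:
    """Return tasks whose sim-to-real drop exceeds max_drop, worst first."""
    drops = {t: relative_drop(sim[t], real[t]) for t in sim if t in real}
    flagged = [t for t, d in drops.items() if d > max_drop]
    return sorted(flagged, key=lambda t: drops[t], reverse=True)


sim_success = {"pick_box": 0.95, "place_shelf": 0.90, "regrasp_after_slip": 0.80}
real_success = {"pick_box": 0.88, "place_shelf": 0.63, "regrasp_after_slip": 0.48}
print(tasks_needing_iteration(sim_success, real_success))
# ['regrasp_after_slip', 'place_shelf']
```

Ranking by relative rather than absolute drop keeps tasks with low simulated success from hiding behind small raw differences.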

However, this creates a subtle but important training challenge: photorealistic geometry does not automatically capture the unpredictable contact physics of a slipping grasp, a rolling object, or an item that shifts under load. A simulation that looks real but behaves ideally will produce policies that fail precisely in the moment of recovery. Closing this gap requires deliberately injecting grasp failure and recovery scenarios into neurally reconstructed environments, not just running successful demonstrations in realistic scenes.

Layer 4: world models (the anticipation engine)

Using world models like NVIDIA Cosmos-Predict, robots anticipate, not just react:

“This passenger’s posture suggests they'll shift left in 2 seconds”

“The fabric texture indicates possible concealed rigid object”

“Based on tremor patterns, this elderly patient may need extra support”

Cosmos-Reason1 provides chain-of-thought reasoning that creates audit trails, which is critical for security and medical applications where every decision may be reviewed.

This anticipation capability is especially important for seemingly “simple” tasks like pick and place. Recent NVIDIA research makes this concrete: the authors of DreamZero (Ye et al., 2026), a World Action Model trained on the AgiBot dataset, found that standard Vision-Language-Action models default to pick-and-place motions regardless of instruction. This finding suggests that such models overfit to dominant training behaviors rather than reasoning about the task.

A robot that cannot reason about what it is doing also cannot recover when something goes wrong mid-execution. When a grasped object slips, rotates unexpectedly, or drops entirely, recovery is not a scripted fallback; it requires the robot to re-evaluate the world state, re-plan grasp geometry, and execute a corrective action in real time. World models like Cosmos-Predict and the DreamDojo framework (trained on 44k hours of human egocentric video) give robots the predictive foundation to detect grasp failure before it becomes a drop, and to anticipate corrective trajectories before contact is lost.
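The detect-before-drop idea can be illustrated with a prediction residual: compare where a model expects the grasped object to be with where the sensors say it is, and trigger recovery when the two diverge. In this sketch a constant-velocity extrapolation stands in for a learned world model such as Cosmos-Predict; the predictor, the 1 cm threshold, and the trajectory are all assumptions made for illustration.

```python
import math
from typing import List, Optional, Tuple

Point = Tuple[float, float, float]  # object position in metres


def predict_next(prev: Point, curr: Point) -> Point:
    """Constant-velocity stand-in for a learned world model's one-step prediction."""
    return tuple(2 * c - p for p, c in zip(prev, curr))


def first_slip(track: List[Point], threshold_m: float = 0.01) -> Optional[int]:
    """Return the index of the first observation that deviates from prediction."""
    for i in range(2, len(track)):
        predicted = predict_next(track[i - 2], track[i - 1])
        if math.dist(predicted, track[i]) > threshold_m:
            return i  # hand off to re-planning before the object is lost
    return None


# Object lifted 1 cm per step, then it slips 1.5 cm down in the gripper.
track = [(0.0, 0.0, 0.10), (0.0, 0.0, 0.11), (0.0, 0.0, 0.12),
         (0.0, 0.0, 0.13), (0.0, 0.0, 0.115)]
print(first_slip(track))  # 4
```

A learned model earns its keep where this stand-in fails: contact-rich motion that is not smooth, where only a model of the physics can say what "expected" looks like.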

Layer 5: reinforcement learning (the safety net)

Imitation learning teaches standard task execution. Reinforcement learning teaches recovery and safety. This distinction matters most for tasks that appear deceptively simple. Pick and place is the canonical “solved” robot task, but DreamZero’s evaluations show that even state-of-the-art VLAs achieve near-zero performance on pick-and-place variants in unseen environments, precisely because they were trained predominantly on successful executions.

Real pick-and-place in production involves dropped objects, slipped grasps, items that roll or deform on contact, and partial occlusions mid-motion. Data collection pipelines must deliberately capture these failure modes: teleoperated recovery sequences after intentional drops, grasp reattempts from perturbed positions, and reactive replanning when contact is lost. Without this failure coverage, reinforcement learning has no signal to optimize from when the happy path breaks down. Centific’s internal operational process recommends training with:

10× sampling weight on edge cases and failures

Safety constraints baked into reward functions (force limits are hard constraints, not soft penalties)

Adversarial training where simulated humans actively test system robustness
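The first two recommendations in the list above can be sketched together: failure and edge-case episodes get ten times the sampling weight when building training batches, and exceeding the force limit ends the episode with a fixed penalty rather than being traded off against task reward. The 10x weight comes from the list; the force limit, penalty value, and episode tags are hypothetical, and termination is only one common way to approximate a hard constraint in RL.

```python
import random
from typing import Dict, List, Tuple

FAILURE_WEIGHT = 10.0    # 10x sampling weight on edge cases and failures
FORCE_LIMIT_N = 20.0     # hypothetical hard force limit
VIOLATION_REWARD = -1.0  # fixed penalty paid when the limit is crossed


def sample_batch(episodes: List[Dict[str, str]], k: int, rng: random.Random) -> List[Dict[str, str]]:
    """Draw k episodes with replacement, upweighting failures and edge cases."""
    weights = [FAILURE_WEIGHT if ep["tag"] in ("failure", "edge_case") else 1.0
               for ep in episodes]
    return rng.choices(episodes, weights=weights, k=k)


def step_reward(task_progress: float, contact_force_n: float) -> Tuple[float, bool]:
    """Return (reward, done) for one control step.

    Crossing the force limit terminates the episode with a fixed penalty,
    rather than subtracting a force-proportional cost that the policy could
    trade against task progress.
    """
    if contact_force_n > FORCE_LIMIT_N:
        return VIOLATION_REWARD, True
    return task_progress, False


episodes = [{"id": str(i), "tag": "success"} for i in range(90)]
episodes += [{"id": str(90 + i), "tag": "failure"} for i in range(10)]
batch = sample_batch(episodes, k=1000, rng=random.Random(42))
failure_share = sum(ep["tag"] == "failure" for ep in batch) / len(batch)
print(f"failure share in batch: {failure_share:.2f}")
print(step_reward(0.4, 25.0))  # (-1.0, True)
print(step_reward(0.4, 12.0))  # (0.4, False)
```

With 10 failures among 100 episodes, the weighting makes failures about 100/190, or roughly 53%, of draws in expectation, compared with 10% under uniform sampling.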

The robot learns: “When contact force exceeds the threshold, release immediately. When uncertainty is high, request human assistance. Never guess on a safety-critical decision.”
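Those three rules can also be enforced outside the learned policy, as a supervisory check that runs before each action executes. This is a sketch with hypothetical thresholds and decision names; in a real system the limits would come from the safety case, not from code defaults.

```python
from enum import Enum

FORCE_THRESHOLD_N = 15.0            # hypothetical release threshold
UNCERTAINTY_THRESHOLD = 0.30        # hypothetical escalation threshold
SAFETY_CRITICAL_UNCERTAINTY = 0.05  # stricter bound when a mistake could cause harm


class Decision(Enum):
    RELEASE = "release"
    REQUEST_HUMAN = "request_human"
    PROCEED = "proceed"


def supervise(contact_force_n: float, uncertainty: float, safety_critical: bool) -> Decision:
    """Check the rules in priority order before each action is executed."""
    if contact_force_n > FORCE_THRESHOLD_N:
        return Decision.RELEASE        # over the force threshold: release immediately
    limit = SAFETY_CRITICAL_UNCERTAINTY if safety_critical else UNCERTAINTY_THRESHOLD
    if uncertainty > limit:
        return Decision.REQUEST_HUMAN  # too uncertain to act alone: escalate
    return Decision.PROCEED


print(supervise(18.0, 0.02, safety_critical=False).value)  # release
print(supervise(8.0, 0.45, safety_critical=False).value)   # request_human
print(supervise(8.0, 0.10, safety_critical=True).value)    # request_human
print(supervise(8.0, 0.10, safety_critical=False).value)   # proceed
```

Checking force before uncertainty means the release rule wins even when the model is confident, which matches the priority the rules imply.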

The human-in-the-loop imperative

What separates research demonstrations from production systems is not dexterity or autonomy, but how responsibility is structured once a system operates around people. In high-stakes, human-contact environments, keeping humans in the loop is a deliberate design choice that shapes system architecture, training, and deployment.

In security screening, realistic near-term deployments rely on a clear division of labor. The robot performs routine, physically repetitive screening actions with consistent force and coverage, reducing fatigue and variability. A human officer observes every interaction in real time through camera and sensor feeds, actively supervising rather than passively monitoring. Any anomaly, including unexpected sensor readings, atypical passenger movement, or elevated model uncertainty, triggers immediate human takeover. All interactions are fully recorded, with synchronized multimodal sensor data retained to support auditability, training refinement, and post-incident review.

This structure reflects an operational reality: robots can deliver consistency and endurance, but ambiguity and context still require human judgment. The system is designed so uncertainty escalates to a person instead of being resolved autonomously.

In medical assistance, the control boundary is even more explicit. A physician is always present and remains responsible for procedural decisions. The robot functions as an extension of the clinician’s physical capabilities, providing steadier positioning, repeatable motion, or controlled force application, without substituting medical judgment. Force limits are hardcoded below established injury thresholds rather than learned dynamically, ensuring conservative behavior under all conditions. Emergency stop mechanisms are always accessible and prioritized in the control stack, not treated as secondary safeguards.

Across both domains, autonomy is intentionally constrained. The objective is not to remove humans from the loop, but to design systems where machine execution and human accountability are clearly separated, enforceable, and auditable.

Full autonomy is not a realistic goal for high-stakes human contact, and framing it as such obscures the real work ahead. The opportunity lies in building collaborative systems where human oversight is explicit by design and scaled through infrastructure, not eliminated in pursuit of autonomy for its own sake.

The data infrastructure gap

The fundamental challenge in humanoid AI is data infrastructure, not algorithms. Building a humanoid robot is largely a hardware problem, and the industry is making visible progress on that front. Actuators are improving. Sensors are getting cheaper and more capable. Form factors are converging. What is far less mature is the infrastructure required to train those robots to operate reliably in the physical world, especially around people.

Training a humanoid does not mean collecting a few successful demonstrations in a lab. It requires global networks of data collection facilities equipped with calibrated sensors that behave consistently across locations and platforms. Vision, depth, force, torque, and tactile streams must be synchronized to within 5 milliseconds so that actions, contact, and perception can be meaningfully aligned. Small timing errors at this layer cascade into brittle behavior later.
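Aligning streams that run at different rates usually means matching each sample to the nearest sample in another stream and rejecting matches that are too far apart. The sketch below does that with a binary search and the 5 ms budget from the text; the stream rates and timestamps are made up, and it assumes clocks are already synchronized in hardware, which this code does not address.

```python
import bisect
from typing import List, Optional

TOLERANCE_S = 0.005  # 5 ms alignment budget


def align(reference_ts: List[float], other_ts: List[float],
          tolerance_s: float = TOLERANCE_S) -> List[Optional[int]]:
    """For each reference timestamp, return the index of the nearest sample in
    other_ts (which must be sorted), or None if nothing falls within tolerance."""
    matches: List[Optional[int]] = []
    for t in reference_ts:
        i = bisect.bisect_left(other_ts, t)
        # The nearest neighbour is either other_ts[i - 1] or other_ts[i].
        candidates = [j for j in (i - 1, i) if 0 <= j < len(other_ts)]
        best = min(candidates, key=lambda j: abs(other_ts[j] - t), default=None)
        if best is not None and abs(other_ts[best] - t) <= tolerance_s:
            matches.append(best)
        else:
            matches.append(None)  # dropout: no force sample close enough
    return matches


camera_ts = [0.0, 0.0333, 0.0667, 0.1]  # ~30 Hz video frames
# 200 Hz force/torque samples with a dropout between 0.055 s and 0.095 s.
force_ts = [round(i * 0.005, 3) for i in range(12)] + [0.095, 0.100]
print(align(camera_ts, force_ts))  # [0, 7, None, 13]
```

Returning `None` instead of the nearest far-away sample makes dropouts explicit, so downstream training can skip or flag those frames rather than silently pairing vision with stale force data.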

The data itself must span real-world diversity. That means variation across environments, lighting conditions, body types, clothing, mobility patterns, and edge cases that rarely appear in controlled settings. Success cases alone are not sufficient. Failures, recoveries, hesitation, and handoffs matter just as much, particularly for contact-rich tasks where safety and judgment are intertwined.

At this scale, quality assurance becomes a system, not a manual review step. Human demonstrations must be validated, annotated, and audited with consistency. In mature pipelines, first-pass quality matters because downstream correction is expensive. Programs that achieve high first-submission pass rates are not just more efficient; they are more predictable and governable.

Governance infrastructure is inseparable from this process. Physical interaction data often includes personally identifiable information, sensitive imagery, and regulated contexts. PII detection, compliance flagging, and chain-of-custody tracking must be built into the pipeline from the start, not added later. Without that foundation, data cannot be reused, shared, or defended once systems move toward deployment.
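Chain-of-custody tracking is often implemented as an append-only log in which each entry includes a hash of the previous one, so any later edit to the history breaks verification. This is a minimal sketch of that general technique using SHA-256 from Python's standard library; the event fields and actor names are hypothetical, and it does not cover PII detection or compliance flagging.

```python
import hashlib
import json
from typing import Dict, List

GENESIS = "0" * 64  # placeholder hash before the first record


def _digest(entry: Dict[str, str], prev_hash: str) -> str:
    payload = json.dumps(entry, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class CustodyLog:
    """Append-only log where each record commits to everything before it."""

    def __init__(self) -> None:
        self.records: List[Dict[str, str]] = []

    def append(self, episode_id: str, actor: str, action: str) -> str:
        prev = self.records[-1]["hash"] if self.records else GENESIS
        entry = {"episode_id": episode_id, "actor": actor, "action": action}
        h = _digest(entry, prev)
        self.records.append({**entry, "prev": prev, "hash": h})
        return h

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered record breaks the chain."""
        prev = GENESIS
        for rec in self.records:
            entry = {k: rec[k] for k in ("episode_id", "actor", "action")}
            if rec["prev"] != prev or rec["hash"] != _digest(entry, prev):
                return False
            prev = rec["hash"]
        return True


log = CustodyLog()
log.append("ep-0042", "collector-7", "captured")
log.append("ep-0042", "pii-scan", "faces_blurred")
log.append("ep-0042", "qa-reviewer-2", "approved")
print(log.verify())  # True
log.records[1]["action"] = "skipped"  # tamper with history
print(log.verify())  # False
```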

The gap between what a demo requires and what production demands is substantial. A compelling demo can be built with a few hundred carefully staged demonstrations, collected over weeks in a lab, on a single robot embodiment, and optimized around success cases. A production system requires tens of thousands of demonstrations collected over years, spanning real-world diversity, transferred across embodiments, and stress-tested on edge cases and failures.

This infrastructure does not yet exist at the scale required for widespread deployment. Until it does, progress in humanoid AI will continue to look impressive on stage and fragile in practice.

What to ask next

When you evaluate humanoid systems for real-world use, the most revealing questions are not about walking or grasping.

Ask how many demonstrations exist for the specific task. Ask how edge cases are represented. Ask what happens when something goes wrong. Ask how human oversight is integrated.

The answers distinguish a demonstration from a deployment path.

The humanoid race is not about who builds the most robots. It is about who builds the data infrastructure to train robots for tasks that matter.

That race has only just begun.

Centific can help

Centific’s physical AI practice enables machines to perceive, reason, and act reliably in complex real-world environments. We combine computer vision, simulation, sensor fusion, and adaptive control across dynamic settings including warehouses, factories, and urban environments. We contribute purpose-built datasets and evaluation frameworks for physical and embodied AI development, including egocentric video with synchronized IMU and depth data, manipulation task demonstrations with annotated intent and object state transitions, and sim-to-real transfer benchmarks that bridge structured simulation outputs to real-world domain adaptation pipelines.

Our annotation workflows are purpose-aligned to world foundation model architectures, supporting NVIDIA Cosmos-style physics-aware video generation and Meta V-JEPA 2-style latent-space predictive training, with reasoning traces that go beyond labeling what happened to capturing why it happened and what should happen next. These capabilities are grounded in research-backed methodologies and strategic partnerships with NVIDIA, UC San Diego, and several other universities, helping move physical AI from research prototype to scalable deployment alongside people in real-world settings with high-fidelity real-world data.

We support physical AI development through a global network of robotics data factories, distributed data collectors, and operating businesses that generate diverse training signals from real environments. These pipelines capture the observational and behavioral data needed to train and validate robots that must operate in unpredictable conditions outside the lab. Real-world data allows organizations to move beyond purely simulated environments and build physical AI systems that adapt, learn, and function reliably in the physical world.

References

Ye, S., et al. "DreamZero: World Action Models are Zero-shot Policies." NVIDIA (2026). dreamzero0.github.io

Gao, S., Liang, W., et al. "DreamDojo: A Generalist Robot World Model from Large-Scale Human Videos." arXiv:2602.06949 (2026). dreamdojo-world.github.io

NVIDIA Developer Blog. "How to Instantly Render Real-World Scenes in Interactive Simulation." Omniverse NuRec / 3DGUT (2025). developer.nvidia.com/blog/how-to-instantly-render-real-world-scenes-in-interactive-simulation

Yip, M. "The robot will see you now: Foundation models are the path forward for autonomous robotic surgery." Science Robotics (July 2025).

Krishna, L. "GAZE: Governance-Aware pre-annotation for Zero-shot World Model Environments." arXiv:2510.14992 (October 2025).

Atar, S., et al. "Humanoids in Hospitals: A Technical Study of Humanoid Surrogates for Dexterous Medical Interventions." arXiv:2503.12725 (2025). surgie-humanoid.github.io

Khazatsky, A., et al. "DROID: A Large-Scale In-The-Wild Robot Manipulation Dataset." arXiv:2403.12945 (2024).

Open X-Embodiment Collaboration. "Open X-Embodiment: Robotic Learning Datasets and RT-X Models." arXiv:2310.08864 (2023).

Li, C., et al. "BEHAVIOR-1K: A Human-Centered, Embodied AI Benchmark with 1,000 Everyday Activities and Realistic Simulation." CoRL (2024).

Dass, S., et al. "PATO: Policy Assisted TeleOperation for Scalable Robot Data Collection." arXiv:2212.04708 (2023).

Mandlekar, A., et al. "Scaling Robot Supervision to Hundreds of Hours with RoboTurk." IROS (2019).
