Centific AI Research Team

Ashi Jain

Kriti Banka

Manish Mehta

Naman Khandelwal

Parth Kulshreshtha

Sunil Kothari

AI models often appear more capable than they truly are because their performance is judged against benchmarks that abstract away how software is built inside real organizations. When performance is measured on isolated puzzles instead of real repositories, workflows, and constraints, models can appear production-ready while struggling in enterprise environments. Correcting this gap requires grounding both evaluation and development in real code and retaining human expertise where judgment and context matter.

Evaluating models against real enterprise code surfaces a second, more practical challenge. Even when models are tested in realistic development environments, general-purpose LLMs consistently fall short on domain-specific generation. Financial algorithms, bioinformatics pipelines, robotics control systems, and other specialized workloads demand an understanding of domain rules, standards, and edge cases that generic models do not reliably possess.

Addressing this gap requires more than prompt tuning; it calls for disciplined data curation, human-in-the-loop validation, and fine-tuning workflows designed for enterprise conditions.

Why domain specialization is necessary

General-purpose LLMs are optimized for breadth. They perform well across many tasks, but that generality becomes a limitation when enterprises need code that reflects industry rules, regulatory constraints, and hard-won institutional knowledge. In practice, syntactically correct code is often insufficient. What matters is whether the code aligns with domain logic, internal standards, and production requirements.

This mirrors the problem described in our earlier benchmark analysis. Just as puzzle-based benchmarks misrepresent real development, generic models misrepresent what enterprise-ready code generation actually entails.

Overview of the fine-tuning workflow

The workflow presented here outlines a production-ready system for collecting, curating, fine-tuning, and evaluating LLMs on domain-specific codebases. It is designed around three principles that align directly with our earlier findings:

Real enterprise data must replace synthetic abstractions

Human judgment must guide ambiguity, not be removed from the process

Evaluation must reflect how code is actually written, reviewed, and deployed

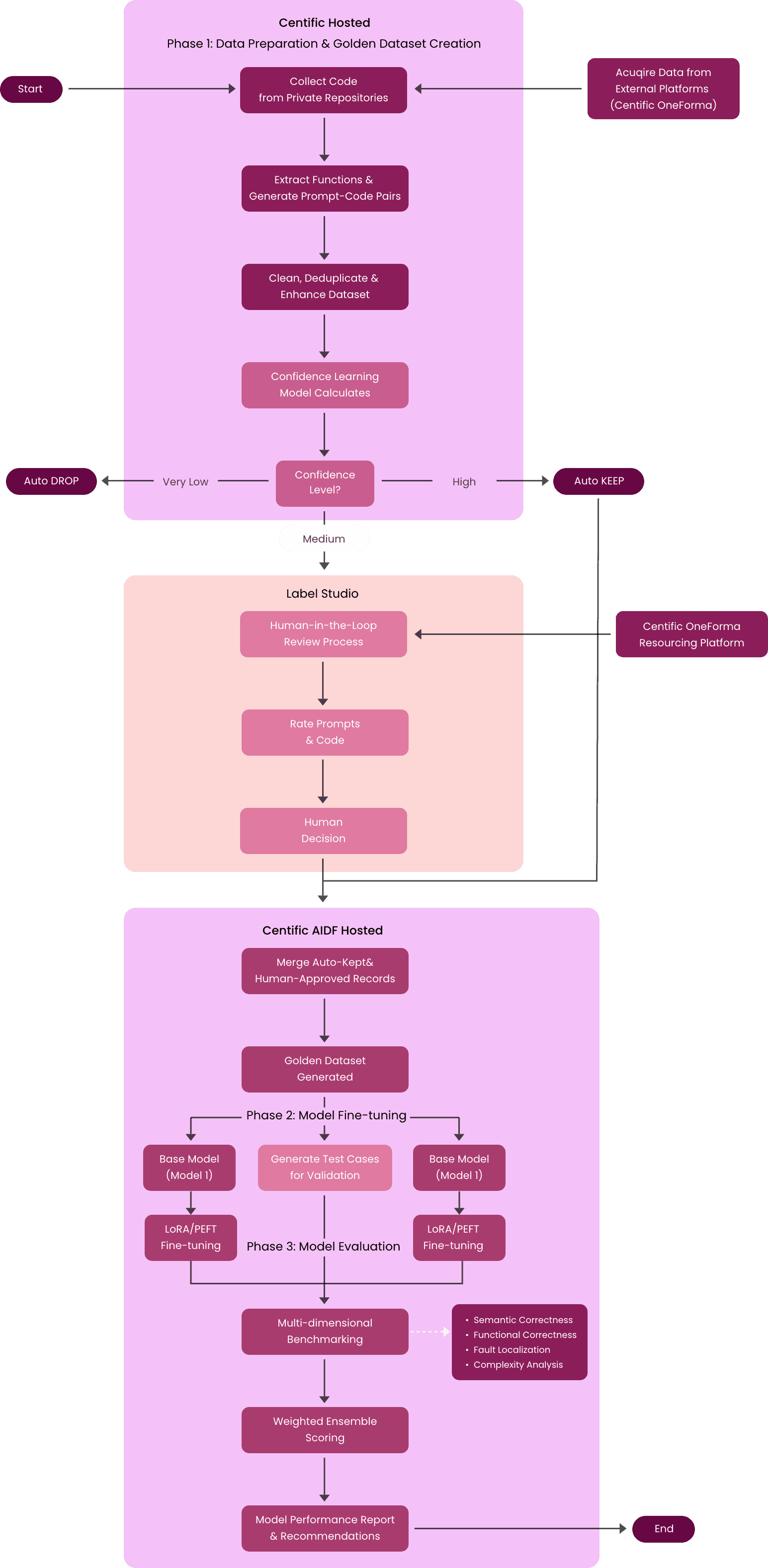

The figure above illustrates the core workflow for domain-specific code generation, focusing on data preparation, fine-tuning, and evaluation.

The three-phase fine-tuning architecture

Building domain-specific LLMs requires a workflow that mirrors how enterprise software is created, reviewed, and deployed. This architecture organizes that work into three connected phases, each addressing a distinct risk: poor data quality, shallow specialization, and misleading evaluation.

Phase 1: data preparation and golden dataset creation

The foundation of domain-specific performance is data that reflects real enterprise code, not synthetic examples. This phase focuses on assembling raw material from credible sources and shaping it into a dataset suitable for both training and evaluation.

Multi-source data collection

To capture the breadth and nuance of real-world development, data is drawn from multiple complementary sources rather than a single repository type.

Private repository mining: extract high-quality code from enterprise codebases with proper anonymization

External platform integration: rely on specialized data platforms (like Centific OneForma) for diverse code samples

Function extraction: parse repositories to identify and extract functions with their associated metadata

Prompt-code pair generation: create structured training examples linking natural language descriptions to code implementations
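
As a rough illustration of the function extraction and prompt-code pairing steps, the sketch below uses Python's standard `ast` module to pull documented functions out of a source file. It assumes Python sources and treats the first docstring line as the natural-language prompt; the record fields, the sample function, and the file path are hypothetical, and anonymization is not shown.

```python
import ast


def extract_prompt_code_pairs(source: str, path: str = "<unknown>") -> list[dict]:
    """Return one record per documented function found in `source`."""
    tree = ast.parse(source)
    pairs = []
    for node in ast.walk(tree):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            doc = ast.get_docstring(node)
            if not doc:
                continue  # no description available to serve as a prompt
            pairs.append({
                "prompt": doc.strip().splitlines()[0],
                "code": ast.get_source_segment(source, node),
                # Metadata kept alongside each pair (field names are illustrative).
                "name": node.name,
                "path": path,
                "lineno": node.lineno,
                "args": [a.arg for a in node.args.args],
            })
    return pairs


# Hypothetical input file contents.
SAMPLE = '''
def present_value(cashflow, rate, years):
    """Discount a single future cashflow back to today."""
    return cashflow / (1 + rate) ** years

def _helper(x):
    return x
'''

pairs = extract_prompt_code_pairs(SAMPLE, path="pricing/discount.py")
for pair in pairs:
    print(pair["name"], "->", pair["prompt"])
```

Undocumented functions such as `_helper` are skipped here; a production pipeline might instead route them to a step that writes descriptions.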

Raw collection alone is insufficient. Enterprise repositories contain noise, redundancy, and uneven quality that must be addressed before models ever see the data.

Quality control pipeline

This step introduces automated safeguards that narrow the dataset before any human effort is applied.

Data cleaning: automated deduplication, syntax validation, and noise removal processes

Dataset enhancement: standardize formatting, improve documentation, and enrich metadata

Confidence learning assessment: deploy machine learning models to score sample quality and difficulty

Three-tier quality classification

Very Low Confidence → Automatic rejection to eliminate poor-quality samples

High Confidence → Automatic inclusion for verified high-quality code

Medium Confidence → Human expert review through structured evaluation process
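
The data cleaning step in this pipeline can be sketched in a few lines. The example below assumes Python samples held in memory as strings, uses `ast.parse` for syntax validation, and deduplicates on a hash of whitespace-normalized text. The helper names are hypothetical, and near-duplicate detection would need a stronger technique than exact hashing.

```python
import ast
import hashlib


def normalize(code: str) -> str:
    """Drop trailing whitespace and blank lines so trivial variants hash alike."""
    lines = [line.rstrip() for line in code.strip().splitlines()]
    return "\n".join(line for line in lines if line)


def is_valid_python(code: str) -> bool:
    """Syntax check only; it does not execute or type-check the sample."""
    try:
        ast.parse(code)
        return True
    except (SyntaxError, ValueError):
        return False


def clean(samples: list[str]) -> list[str]:
    """Keep the first occurrence of each syntactically valid sample."""
    seen = set()
    kept = []
    for code in samples:
        if not is_valid_python(code):
            continue
        digest = hashlib.sha256(normalize(code).encode("utf-8")).hexdigest()
        if digest in seen:
            continue
        seen.add(digest)
        kept.append(code)
    return kept


raw = [
    "def f(x):\n    return x\n",
    "def f(x):\n    return x   \n\n",  # same code, different whitespace
    "def g(:\n    pass\n",             # does not parse
]
cleaned = clean(raw)
print(len(cleaned))
```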

Automation reduces scale, but judgment is required where ambiguity remains. That is where human expertise becomes essential.

Human-in-the-loop validation

Human review is deliberately focused on the gray areas where automation cannot reliably determine quality or intent.

Expert review platform: integration with annotation tools (Label Studio) for systematic evaluation

Structured assessment: domain experts rate prompts for clarity and code for correctness

Quality scoring: comprehensive evaluation across multiple dimensions (functionality, readability, best practices)

Final decision gateway: human experts make keep, reject, or modify decisions for uncertain samples

Resource platform integration: scalable access to domain experts through specialized platforms

Once automated and human review converge, the remaining task is to assemble a dataset that is balanced, representative, and trusted.

Golden dataset assembly

This final step consolidates the output of earlier stages into a dataset suitable for enterprise-grade fine-tuning.

Merge validated samples: combine automatically approved and human-validated code samples

Distribution balancing: ensure representative coverage across different complexity levels and use cases

Final quality assurance: comprehensive review of the complete dataset before training begins
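
One simple way to approach distribution balancing is to cap how many samples each bucket contributes. The sketch below assumes each sample already carries a complexity label; the `complexity` field, the bucket names, and the cap are illustrative choices, not values from the workflow.

```python
import random
from collections import Counter, defaultdict


def balance(samples, key, per_bucket, seed=0):
    """Keep at most `per_bucket` randomly chosen samples from each bucket."""
    rng = random.Random(seed)  # fixed seed keeps the selection reproducible
    buckets = defaultdict(list)
    for sample in samples:
        buckets[key(sample)].append(sample)
    balanced = []
    for name in sorted(buckets):
        items = buckets[name]
        rng.shuffle(items)
        balanced.extend(items[:per_bucket])
    return balanced


# Hypothetical, skewed dataset: many easy samples, few hard ones.
samples = (
    [{"id": i, "complexity": "easy"} for i in range(10)]
    + [{"id": 10 + i, "complexity": "medium"} for i in range(3)]
    + [{"id": 13, "complexity": "hard"}]
)
balanced = balance(samples, key=lambda s: s["complexity"], per_bucket=3)
counts = Counter(s["complexity"] for s in balanced)
print(dict(counts))
```

Capping does nothing for underrepresented buckets (the single "hard" sample stays single), so a gap like that is a signal to collect more data rather than something sampling can fix.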

Phase 2: model fine-tuning

With a golden dataset in place, the focus shifts from data quality to controlled specialization. This phase injects domain knowledge into base models while preserving their general reasoning capabilities.

Parallel model training strategy

Training multiple variants in parallel allows teams to compare approaches rather than betting on a single configuration.

Multiple base models: train specialized variants simultaneously (Model 1, Model 2) to compare approaches

LoRA/PEFT implementation: parameter-efficient fine-tuning to maintain general capabilities while adding domain expertise

Test case generation: create comprehensive validation suites for each model variant during training

Training monitoring: real-time performance tracking and early stopping mechanisms
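
The early stopping mechanism mentioned above can be expressed independently of any training framework. This is a minimal sketch that assumes validation loss is the monitored signal; the class name, `patience`, `min_delta`, and the loss values are all hypothetical.

```python
class EarlyStopping:
    """Signal a stop after `patience` evaluations without meaningful improvement."""

    def __init__(self, patience: int = 3, min_delta: float = 0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.bad_evals = 0

    def should_stop(self, val_loss: float) -> bool:
        if val_loss < self.best - self.min_delta:
            self.best = val_loss   # meaningful improvement: reset the counter
            self.bad_evals = 0
        else:
            self.bad_evals += 1
        return self.bad_evals >= self.patience


# Invented validation losses, one per evaluation step.
losses = [0.90, 0.72, 0.65, 0.66, 0.67, 0.65, 0.70]
stopper = EarlyStopping(patience=3, min_delta=0.01)
stopped_at = None
for step, loss in enumerate(losses):
    if stopper.should_stop(loss):
        stopped_at = step
        break
print(stopped_at, stopper.best)
```

When variants are trained in parallel, giving each one its own stopper instance lets weaker configurations stop early without affecting the others.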

Fine-tuning alone does not guarantee readiness. Specialized models must still be evaluated against realistic criteria that reflect enterprise use.

Phase 3: model evaluation

Evaluation determines whether specialization translates into practical reliability. This phase moves beyond single metrics to assess performance across dimensions that matter in real development environments.

Multi-dimensional benchmarking

Each model is evaluated against multiple lenses to capture correctness, robustness, and maintainability.

Semantic correctness: evaluate whether generated code matches intended functionality and business logic

Functional correctness: test code execution, compilation success, and runtime behavior

Fault localization: systematic identification and categorization of error patterns

Complexity analysis: assessment using established metrics (Cyclomatic, Halstead, LOC)
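
To make the complexity dimension concrete, the sketch below approximates cyclomatic complexity and a logical line count for Python code using the standard `ast` module. It is an approximation under stated assumptions (one point per branch, loop, handler, and extra boolean operand), not a replacement for a dedicated metrics tool, and Halstead metrics are not shown.

```python
import ast

# Node types counted as one decision point each (an assumed, simplified rule set).
_DECISION_NODES = (
    ast.If, ast.For, ast.AsyncFor, ast.While, ast.ExceptHandler,
    ast.IfExp, ast.Assert, ast.comprehension,
)


def cyclomatic_complexity(source: str) -> int:
    """Approximate McCabe complexity: 1 plus the number of decision points."""
    complexity = 1
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, _DECISION_NODES):
            complexity += 1
        elif isinstance(node, ast.BoolOp):
            # `a or b or c` adds two extra paths.
            complexity += len(node.values) - 1
    return complexity


def logical_loc(source: str) -> int:
    """Count non-blank lines that are not pure comments."""
    return sum(
        1 for line in source.splitlines()
        if line.strip() and not line.strip().startswith("#")
    )


SAMPLE = (
    "def classify(x, limit):\n"
    "    if x is None or limit <= 0:\n"
    "        return 'invalid'\n"
    "    for step in range(limit):\n"
    "        if x > step:\n"
    "            return 'high'\n"
    "    return 'low'\n"
)
print(cyclomatic_complexity(SAMPLE), logical_loc(SAMPLE))
```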

Because no single metric captures enterprise readiness, results must be synthesized into a coherent signal.

Weighted ensemble scoring

Scores are combined in a way that reflects domain priorities rather than abstract benchmark conventions.

Composite metrics: combine multiple evaluation dimensions into unified quality scores

Domain-specific weighting: adjust metric importance based on business priorities and use case requirements

Performance comparison: direct benchmarking between model variants and baseline systems
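
A weighted composite can be as simple as a normalized weighted mean. In the sketch below, the metric names, weights, and scores are invented for illustration (a hypothetical finance team that weights compliance heavily); none of them are measured results.

```python
def composite_score(metrics: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted mean of per-dimension scores, each assumed to lie in [0, 1]."""
    missing = set(weights) - set(metrics)
    if missing:
        raise KeyError(f"metrics missing for: {sorted(missing)}")
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("weights must sum to a positive value")
    return sum(metrics[name] * w for name, w in weights.items()) / total


# Hypothetical domain weighting.
weights = {"functional": 0.4, "semantic": 0.3, "compliance": 0.2, "maintainability": 0.1}

# Invented scores for two model variants.
model_a = {"functional": 0.9, "semantic": 0.8, "compliance": 0.5, "maintainability": 0.7}
model_b = {"functional": 0.8, "semantic": 0.8, "compliance": 0.9, "maintainability": 0.6}

score_a = composite_score(model_a, weights)
score_b = composite_score(model_b, weights)
print(round(score_a, 2), round(score_b, 2))
```

With these weights the variant that is weaker on raw functional correctness still ranks higher because of its compliance score, which is the kind of trade-off domain-specific weighting is meant to surface.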

The final step ensures that evaluation leads to actionable decisions, not just reports.

Final assessment

Results are translated into guidance that supports deployment and iteration.

Model performance report: comprehensive analysis of strengths, weaknesses, and deployment readiness

Recommendations: data-driven guidance for model selection and deployment strategies

Success criteria validation: confirmation that models meet predefined quality and performance thresholds

These outputs turn evaluation into a decision-making tool rather than a retrospective scorecard. They help teams determine not only which model performs best, but whether a model is ready to be trusted in real production environments.

Illustrative use case: CodeCraft Enterprise

To understand why domain-specific fine-tuning matters in practice, consider a hypothetical AI company, CodeCraft Enterprise, which offers a general-purpose code generation model to enterprise customers.

Despite strong benchmark performance, CodeCraft’s customers begin requesting capabilities that the model struggles to deliver reliably:

Financial services teams ask for Monte Carlo simulations that comply with internal risk models and regulatory constraints

Biotechnology teams need bioinformatics pipelines aligned with experimental protocols and lab-specific data formats

Manufacturing customers request PLC or robotics control logic with embedded safety interlocks

Aerospace teams require control algorithms compatible with proprietary simulation environments

In each case, the model produces syntactically correct code that fails under real conditions. The failures are not cosmetic. They stem from missing domain rules, incorrect assumptions about dependencies, and an inability to reason about context that never appears in public code.

CodeCraft’s leadership realizes that prompt engineering cannot close this gap. The problem is not how users ask for code; it is what the model has learned to recognize as “correct.”

CodeCraft’s response

Rather than attempting to train a single model to cover every domain, CodeCraft adopts a specialization strategy:

Finance-focused models fine-tuned on quantitative libraries and internal risk patterns

Life-sciences models trained on validated pipelines and experimental workflows

Industrial models optimized for control systems, safety constraints, and embedded environments

Each model is trained using curated private repositories, filtered through quality gates, deduplicated, and reviewed by domain experts before fine-tuning.

Business impact for CodeCraft

As a result:

CodeCraft introduces premium API tiers priced above the general model

Enterprise contracts expand due to increased trust and lower failure rates

The company shifts from “general coding assistant” positioning to “domain-ready engineering systems”

As this example illustrates, domain specialization is not a marginal improvement, but a structural requirement for enterprise-grade code generation.

Technical deep dive: key workflow components

The fine-tuning workflow relies on a set of tightly integrated systems that manage data quality, validation, and evaluation at scale. Each component addresses a specific failure mode that emerges when models are trained on real enterprise code rather than synthetic or public datasets.

1. Confidence learning system

Large enterprise codebases contain wide variability in quality, completeness, and suitability for training. Rather than relying on binary inclusion rules, the workflow uses confidence learning to estimate how trustworthy each code sample is before it enters the training or evaluation pipeline.

The workflow employs a sophisticated confidence estimation approach:

Ensemble predictions: multiple models evaluate each code sample independently

Variance calculation: measure disagreement between model predictions

Entropy analysis: quantify uncertainty in prediction distribution

Confidence scoring: combine variance and entropy into a unified confidence metric

Threshold-based categorization:

Below 0.3: auto-drop (low quality)

0.3 to 0.7: human review required (uncertain)

Above 0.7: auto-keep (high confidence)

Quality control: systematic filtering based on statistical confidence measures
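
The article does not give the formula that combines variance and entropy, so the sketch below shows one assumed combination. Each ensemble member is taken to output a probability that a sample is high quality; the mean prediction is then pulled toward 0.5 in proportion to disagreement (normalized variance) and to the binary entropy of the mean. That way, samples the ensemble agrees are poor score low, samples it agrees are good score high, and contested samples land in the review band. Function names are hypothetical; the 0.3 and 0.7 thresholds come from the list above.

```python
import math
from statistics import mean, pvariance


def binary_entropy(p: float) -> float:
    """Entropy in bits of a Bernoulli(p) variable; 0 when p is 0 or 1."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -(p * math.log2(p) + (1 - p) * math.log2(1 - p))


def confidence_score(predictions: list[float]) -> float:
    """Assumed formula: shrink the mean prediction toward 0.5 by the uncertainty."""
    p_bar = mean(predictions)
    # Population variance of values in [0, 1] is at most 0.25, so this lies in [0, 1].
    disagreement = pvariance(predictions) / 0.25
    uncertainty = (disagreement + binary_entropy(p_bar)) / 2
    return 0.5 + (p_bar - 0.5) * (1 - uncertainty)


def categorize(score: float, low: float = 0.3, high: float = 0.7) -> str:
    if score < low:
        return "auto-drop"
    if score > high:
        return "auto-keep"
    return "human-review"


# Invented ensemble outputs for three samples.
examples = {
    "clean_sample": [0.95, 0.97, 0.93],
    "broken_sample": [0.05, 0.10, 0.03],
    "contested_sample": [0.95, 0.20, 0.90],
}
decisions = {name: categorize(confidence_score(p)) for name, p in examples.items()}
print(decisions)
```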

This system allows automation to handle the majority of obvious cases while reserving human expertise for ambiguous or high-risk samples, keeping both quality and scalability in balance.

2. Test generation and validation

Even high-quality code samples provide limited value if their behavior cannot be validated. Because enterprise repositories often lack runnable or complete test suites, the workflow treats test discovery and generation as a first-class capability rather than an optional enhancement.

Automated test generation ensures functional correctness:

Function analysis: extract function signatures and analyze dependencies

Test category generation:

Unit tests: basic functionality validation for individual functions

Edge cases: boundary conditions, null inputs, extreme values

Integration tests: cross-module compatibility and data flow validation

Performance tests: execution time and resource usage benchmarks

Metadata integration: use function documentation for context-aware test creation

Comprehensive coverage: ensure all code paths and scenarios are tested

Automated validation: execute tests to verify code correctness before deployment
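
As a small illustration of signature-driven edge-case generation, the sketch below reads a function's type annotations with `inspect.signature`, builds boundary inputs from them, and records any exception outside an allowed set. The boundary table, helper names, and the two example functions are hypothetical, and the sketch only handles plain positional parameters.

```python
import inspect
import itertools

# Assumed boundary values per annotated type; a real suite would be domain-specific.
BOUNDARY_VALUES = {
    int: [0, 1, -1, 2**31 - 1],
    float: [0.0, -1.0, 1e-9, 1e12],
    str: ["", "a", "   "],
    list: [[], [0]],
}


def generate_edge_cases(func, limit: int = 50) -> list[tuple]:
    """Cartesian product of boundary values for each annotated parameter."""
    pools = [
        BOUNDARY_VALUES.get(param.annotation, [None])
        for param in inspect.signature(func).parameters.values()
    ]
    return list(itertools.islice(itertools.product(*pools), limit))


def run_edge_cases(func, allowed=(ValueError,)) -> list[tuple]:
    """Return (args, exception name) for every unexpected failure."""
    failures = []
    for args in generate_edge_cases(func):
        try:
            func(*args)
        except allowed:
            continue  # documented, intentional rejection of bad input
        except Exception as exc:
            failures.append((args, type(exc).__name__))
    return failures


def safe_ratio(numerator: float, denominator: float) -> float:
    if denominator == 0:
        raise ValueError("denominator must be non-zero")
    return numerator / denominator


def unsafe_ratio(numerator: float, denominator: float) -> float:
    return numerator / denominator


print(run_edge_cases(safe_ratio))
print(run_edge_cases(unsafe_ratio))
```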

By grounding validation in executable tests rather than static heuristics alone, the workflow reduces false confidence and surfaces failures that only appear under realistic execution conditions.

3. Multi-metric evaluation system

No single metric can capture whether a domain-specialized model is genuinely improving. The workflow therefore applies a layered evaluation strategy that measures behavior before and after fine-tuning, across correctness, quality, and real-world usability.

The workflow incorporates multiple evaluation approaches across two critical phases:

Pre-fine-tuning benchmarking (baseline establishment)

Before any specialization occurs, teams need a clear picture of how a general-purpose model behaves when confronted with real, domain-specific code. This baseline establishes the reference point against which all subsequent improvements are measured, and it helps distinguish genuine domain gaps from broader model limitations.

Domain-specific metrics: establish baseline performance on target domain tasks

Code quality assessment: measure compilation success rates, syntax correctness, and style compliance

Functional correctness: test execution success on domain-specific problem sets

Semantic understanding: evaluate code logic alignment with problem requirements

Performance profiling: benchmark inference speed, memory usage, and resource efficiency

Comparative analysis: compare against existing domain-specific tools and general-purpose models

Edge case handling: document baseline performance on boundary conditions and error scenarios

Grounding evaluation in this baseline helps teams avoid guessing where fine-tuning is needed. They can focus effort on the specific failure modes that matter most within their domain and operating constraints.

Post-fine-tuning evaluation (improvement measurement)

After fine-tuning, evaluation shifts to verifying how the model’s behavior has changed under the same conditions used to establish the baseline. This phase examines whether specialization improves domain performance while preserving reliability across adjacent tasks.

Performance comparison: direct comparison with pre-fine-tuning baseline metrics

Domain improvement analysis: quantify enhancement in specialized knowledge areas

Quality metrics: measure improvements in code correctness, compilation success, and best practices adherence

Regression testing: ensure general capabilities haven't degraded during specialization

A/B testing framework: compare fine-tuned model against baseline on real-world tasks

User satisfaction scoring: collect feedback from domain experts on code quality and usefulness

Deployment readiness assessment: validate model meets production performance standards
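
The baseline comparison and regression check can be sketched as a per-metric diff. All metric names and values below are invented for illustration; the tolerance and the handling of lower-is-better metrics are assumptions, and both runs are presumed to use the same tasks.

```python
def compare_to_baseline(baseline, tuned, tolerance=0.01, lower_is_better=()):
    """Label each shared metric as improved, regressed, or unchanged."""
    report = {}
    for name in sorted(baseline.keys() & tuned.keys()):
        delta = tuned[name] - baseline[name]
        if name in lower_is_better:
            delta = -delta  # for latency-style metrics, a decrease is an improvement
        if delta > tolerance:
            status = "improved"
        elif delta < -tolerance:
            status = "regressed"
        else:
            status = "unchanged"
        report[name] = (round(delta, 4), status)
    return report


# Hypothetical measurements taken before and after fine-tuning.
baseline = {"domain_pass_rate": 0.42, "general_pass_rate": 0.71,
            "compile_rate": 0.93, "latency_s": 1.8}
tuned = {"domain_pass_rate": 0.68, "general_pass_rate": 0.66,
         "compile_rate": 0.935, "latency_s": 1.7}

report = compare_to_baseline(baseline, tuned, lower_is_better={"latency_s"})
for name, (delta, status) in report.items():
    print(f"{name}: {status} ({delta:+})")
```

In this invented example the domain pass rate improves while the general pass rate regresses, which is exactly the pattern regression testing is meant to catch before deployment.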

Results from this phase inform whether a model is suitable for production use, requires additional iteration, or should remain constrained to limited internal workflows.

Shared benchmarking methodologies across evaluation phases

The evaluation signals used before and after fine-tuning are generated through a common set of benchmarking methods. These methods provide consistency across both phases, allowing changes in model behavior to be attributed to fine-tuning rather than shifts in measurement criteria.

Functional correctness: tests execute successfully with expected outputs

Semantic correctness: code logic matches intended behavior and domain conventions

Taxonomy-guided fault localization: systematic error categorization and pattern analysis

Complexity analysis: evaluation using Cyclomatic complexity, Halstead metrics, and Lines of Code measures

Domain compliance: adherence to industry standards, security practices, and regulatory requirements

Weighted ensemble scoring: combined metrics weighted by complexity measures for holistic evaluation
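
To show how functional correctness and fault categorization can share one harness, the sketch below executes a generated function against expected outputs and tags each failure with a coarse category. The category labels and the example function are hypothetical. `exec` is used only to keep the example short; untrusted generated code should run in an isolated sandbox.

```python
def evaluate_candidate(code: str, func_name: str, cases) -> dict:
    """Run `func_name` from `code` on (args, expected) cases and classify faults."""
    result = {"passed": 0, "total": len(cases), "faults": []}
    namespace = {}
    try:
        exec(compile(code, "<candidate>", "exec"), namespace)
    except SyntaxError:
        result["faults"].append("syntax_error")
        return result
    except Exception:
        result["faults"].append("definition_error")
        return result
    func = namespace.get(func_name)
    if not callable(func):
        result["faults"].append("missing_function")
        return result
    for args, expected in cases:
        try:
            actual = func(*args)
        except Exception as exc:
            result["faults"].append(f"runtime_error:{type(exc).__name__}")
            continue
        if actual == expected:
            result["passed"] += 1
        else:
            result["faults"].append("wrong_output")
    return result


# Hypothetical task: simple interest with the rate given in percent.
CASES = [((1000, 5, 2), 100.0), ((500, 10, 0), 0.0)]
GOOD = "def simple_interest(principal, rate_pct, years):\n    return principal * rate_pct * years / 100\n"
BUGGY = "def simple_interest(principal, rate_pct, years):\n    return principal * rate_pct / years / 100\n"
BROKEN = "def simple_interest(principal:\n"

for label, code in [("good", GOOD), ("buggy", BUGGY), ("broken", BROKEN)]:
    print(label, evaluate_candidate(code, "simple_interest", CASES))
```

Aggregating the fault labels across many samples gives a simple error taxonomy that can be compared before and after fine-tuning.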

These benchmarking methods remain constant across both evaluation phases, which keeps measurement stable while allowing model behavior to change. Consistent signals make it possible to observe real improvements, surface regressions early, and understand tradeoffs between correctness, complexity, and domain compliance without distorting results through shifting criteria.

From general capability to enterprise readiness

This fine-tuning workflow marks a move away from general-purpose code generation toward systems built for enterprise realities. Systematic collection, curation, and fine-tuning on domain-specific code allow LLM providers to:

Command premium pricing: specialized expertise supports 3–5x higher rates

Build deeper moats: domain knowledge creates durable competitive advantage

Enable new use cases: specialized models support applications out of reach for general systems

Establish enterprise trust: rigorous validation increases confidence in mission-critical environments

Organizations that adopt this approach early position themselves to lead in high-value enterprise segments, while reliance on undifferentiated, general-purpose models increases the risk of commoditization. For foundational LLM providers, sustained differentiation will depend on pairing broad model capabilities with domain-specific depth, supported by workflows that reflect how software is actually built and maintained.

How Centific helps

Centific helps organizations operationalize this transition through its AI Data Foundry, a foundation designed to work directly with private repositories, human expertise, and enterprise governance requirements. The platform supports golden dataset creation, domain-aware fine-tuning, and evaluation workflows that mirror real development conditions rather than synthetic benchmarks.

As a result, enterprises gain earlier insight into where models hold up, where guardrails are required, and where AI assistance can be applied safely to high-value systems without introducing hidden risk.
