Ahmed Abdellah

Alex Cho

Emily Shen

Kriti Banka

Leela Krishna

Mangesh Damra

Every robotics team has heard this story:

Months of work go into building a simulation environment: physics engines tuned to perfection, domain randomization cranked up, reward functions carefully shaped.

The policy achieves near-perfect task success in sim.

Then it’s transferred to real hardware.

The robot drops the object on the first try.

We measured this pattern directly at Centific. We built a Dexterous Manipulation Benchmark using simulated multi-fingered hands (16 degrees of freedom, 4 articulated fingers), then compared it against over 1,400 real teleoperated episodes from Unitree’s G1 humanoid robot across 29 task datasets — stacking blocks, pouring liquids, folding towels, cleaning tables, organizing tools, packaging cameras, and more.

1,400+ real episodes: the dataset that exposed the gap

Unitree Robotics, the manufacturer of the G1 humanoid, published a large-scale teleoperation dataset (DiverseManip) on HuggingFace, collected by human operators wearing VR headsets and controlling the G1’s arms and grippers in real time. This dataset spans single-arm and dual-arm tasks across three different end-effectors:

| End-Effector | DOF | Type | Tasks Covered |
| --- | --- | --- | --- |
| Unitree Dex1 | 1-DOF | Simple parallel gripper (open/close) | Towel folding, table cleaning, tool organizing, block stacking |
| Unitree Dex3 | 28-DOF | Multi-fingered dexterous hand | Block stacking, pouring, camera packaging, object placement |
| BrainCo Revo 2 | 6-DOF per hand | Anthropomorphic dexterous hand | Rubik's cube grasping, Oreo pickup, precision tasks |

This dataset gave us the real-world ground truth to benchmark against simulation. But it also revealed a critical limitation: the data was collected using standard VR controllers with basic IK retargeting. This method systematically destroys the most valuable signals in teleoperation data. This limitation is exactly what N1 Robotics’ neural retargeting technology is designed to solve.

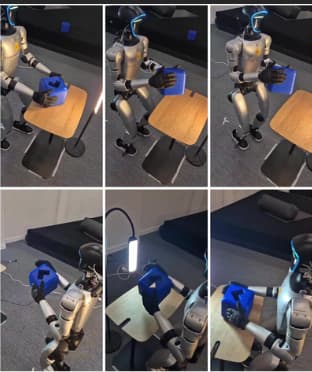

Figure 1: Unitree G1 dexterous manipulation — dual-arm robot performing Rubik's Cube grasping and precision object manipulation tasks across the three end-effector configurations used in Centific’s benchmark.

Figure 2: Unitree G1 humanoid robot performing block manipulation tasks — the same robot platform used across all 29 datasets in Centific’s B4 Dexterous Manipulation Benchmark.

The numbers that opened our eyes

We measured four core metrics across simulation and real-world teleoperation. The results were unambiguous:

| Metric | What it measures | Simulation | Real teleoperation | Gap |
| --- | --- | --- | --- | --- |
| Task Success Rate | Did the robot complete the task? | ~95% | ~83% | ~1.1x |
| Manipulation Accuracy | How precise was object placement? | ~99% | ~68% | ~1.5x |
| Grasp Quality | Was the grasp stable and functional? | ~68% | ~47% | ~1.5x |
| Grasp Adaptiveness | Did the robot adjust mid-task? | ~100% | ~2% | ~50x |

Across these metrics, simulation consistently reports higher scores than real teleoperation. The gap is modest for task success (~1.1x) but grows to ~1.5x for manipulation accuracy and grasp quality and to ~50x for grasp adaptiveness, the measures that determine whether a task succeeds in deployment.
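The Gap column is the ratio of the simulated score to the real teleoperation score. A minimal sketch of that arithmetic follows, using the rounded percentages from the table. The dictionary layout and the `gap_ratio` helper are illustrative names, not part of the benchmark code.

```python
# Rounded scores from the table above, in percent: (simulation, real teleoperation).
SCORES = {
    "task_success_rate": (95.0, 83.0),
    "manipulation_accuracy": (99.0, 68.0),
    "grasp_quality": (68.0, 47.0),
    "grasp_adaptiveness": (100.0, 2.0),
}


def gap_ratio(sim: float, real: float) -> float:
    """Return how many times larger the simulated score is than the real one."""
    if real <= 0:
        raise ValueError("real score must be positive to form a ratio")
    return sim / real


gaps = {name: gap_ratio(sim, real) for name, (sim, real) in SCORES.items()}

for name, gap in gaps.items():
    print(f"{name}: {gap:.2f}x")
```

Because the inputs are already rounded, the computed ratios can differ from the published Gap column by about 0.1x.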

The largest difference appears in grasp adaptiveness. Simulation reports constant adjustment, while real operators adapt in roughly 2 of every 100 frames. That result is not a weakness; it reflects how operators anticipate failure before it occurs, using wrist orientation, approach angle, and timing. A policy trained only in simulation does not learn those anticipatory strategies.

Recent work from NVIDIA points in a similar direction. In introducing the CaP-X framework, Jim Fan, NVIDIA Director of AI & Distinguished Scientist, describes API-driven robotic systems in which perception, planning, and control are composed at runtime, allowing robots to solve certain manipulation tasks zero-shot without task-specific policy training. This approach shifts part of the problem from learning behavior in advance to assembling it during execution, reinforcing the limits of training-only approaches.

When we expanded our analysis across all 29 datasets spanning three different end-effectors (Dex1, Dex3, and BrainCo Revo 2 hands), the dataset with the largest gap showed simulation overestimating grasp adaptiveness by over 80x. Across the full benchmark, 26 out of 29 datasets passed all quality gates, confirming the robustness of our methodology.
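The article does not spell out how grasp adaptiveness is computed. As a rough sketch of a frame-level measure consistent with the "2 of every 100 frames" description, the function below counts the share of frame-to-frame transitions in which any hand joint moves by more than a threshold. The definition, the function name, and the 0.05 rad threshold are all assumptions made for illustration.

```python
from typing import Sequence


def grasp_adaptiveness_rate(
    frames: Sequence[Sequence[float]], threshold: float = 0.05
) -> float:
    """Fraction of frame-to-frame transitions that contain a hand adjustment.

    `frames` holds one list of hand joint positions (radians) per time step.
    A transition counts as an adjustment when any joint moves by more than
    `threshold` between consecutive frames. Both the definition and the
    threshold are assumptions, not the benchmark's actual metric.
    """
    if len(frames) < 2:
        return 0.0
    adjusted = 0
    for prev, curr in zip(frames, frames[1:]):
        if any(abs(c - p) > threshold for p, c in zip(prev, curr)):
            adjusted += 1
    return adjusted / (len(frames) - 1)


# Toy episode: a 1-DOF gripper that holds still except for two adjustments.
episode = [[0.0]] * 50 + [[0.2]] * 30 + [[0.5]] * 21
rate = grasp_adaptiveness_rate(episode)
print(f"adaptiveness rate: {rate:.2f}")
```

In this toy episode, 2 of the 100 transitions contain an adjustment, which is the order of magnitude reported for real operators.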

Why teleoperation teaches what simulation cannot

These results point to a broader issue: simulation captures outcomes, but not how those outcomes are achieved. When a human teleoperates a robot through a manipulation task, such as picking up a slippery bottle, threading a cable, or handing a tool to a colleague, they capture details that simulation does not reproduce: the physics of contact as experienced through imperfect sensing and actuation.

Real contact is messy, and that’s the point

In simulation, when a fingertip contacts an object, the force is modeled as a clean vector computed from a friction cone. In reality, the fingertip deforms, surfaces vary at a micro level, and sensors introduce noise, drift, and delay. The operator responds to these conditions by increasing pressure or adjusting wrist orientation to stabilize the grasp.

Our benchmark showed this clearly: simulated grasps scored higher by the simulator’s own metric, but real teleoperated grasps, though “messier,” were perfectly functional. The teleoperator learned to work with imperfections. A sim-trained policy optimized for the wrong objective would fail.

Recovery is the most valuable signal

The most important moments in any manipulation episode are the failures: the fumbled pickup, the object sliding mid-transfer, the awkward re-grasp. In simulation, failures are rare. In real tasks, recovery happens frequently and provides useful training data. In our teleoperation dataset, nearly one in four episodes included at least one grasp-regrasp cycle. Every one of those is a lesson simulation cannot provide.
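One simple way to count grasp-regrasp cycles is to look for repeated closures in a per-frame "gripper closed" signal. The sketch below assumes such a boolean signal has already been derived from the recorded gripper state, and it treats every closure after the first as a regrasp. That is a simplification, since tasks that deliberately handle several objects would also trigger it. The function names are hypothetical.

```python
from typing import Sequence


def count_regrasps(closed: Sequence[bool]) -> int:
    """Count grasp-release-grasp cycles in a per-frame 'gripper closed' signal.

    Every grasp onset after the first one is treated as a regrasp.
    """
    onsets = 0
    previous = False
    for is_closed in closed:
        if is_closed and not previous:
            onsets += 1
        previous = is_closed
    return max(onsets - 1, 0)


def recovery_fraction(episodes: Sequence[Sequence[bool]]) -> float:
    """Share of episodes that contain at least one regrasp."""
    if not episodes:
        return 0.0
    with_recovery = sum(1 for ep in episodes if count_regrasps(ep) >= 1)
    return with_recovery / len(episodes)


episodes = [
    [False, True, True, True, False],         # single clean grasp
    [False, True, False, True, True, False],  # grasp, slip, regrasp
    [False, True, True, False],               # single clean grasp
    [True, True, True],                       # grasp held throughout
]
fraction = recovery_fraction(episodes)
print(fraction)
```

Here one of the four toy episodes contains a regrasp, so the fraction is 0.25.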

Teleoperation is grounded in physical reality

Two of our benchmark task types, in-hand rotation and self-correction via finger gaiting, had zero matching episodes in the real dataset. Why? Not because the robot couldn’t attempt them, but because a simple gripper physically cannot rotate an object in-hand using finger gaiting. Simulation lets you define any task; reality constrains which tasks are possible with a given end-effector. Teleoperation data is honest. It contains only what the hardware can actually do.

These results show that teleoperation data is not a substitute for simulation, but a reference point for it. Simulation approximates task outcomes, but it misses the contact dynamics that determine real-world success, often by an order of magnitude on key metrics.

What Centific built: the most comprehensive dexterity benchmark in the industry

To quantify this mismatch, Centific built a benchmark that compares simulation and real-world performance directly. The benchmark measures how large the difference is, where it appears, and what drives it across tasks, datasets, and end-effectors.

A benchmark engineered from the ground up

Centific’s Robotics & Physical AI team designed and executed the B4 Dexterous Manipulation Benchmark spanning 29 real-world task datasets, over 1,400 teleoperated episodes, three different dexterous end-effectors (Unitree Dex1, Dex3, and BrainCo Revo 2), and a simulated Allegro hand baseline. This experiment covered:

Household tasks (folding towels, cleaning tables).

Precision tasks (pouring medicine, mounting cameras).

Logistics tasks (packing boxes, organizing tools).

Bimanual coordination (dual-arm cleaning, dishwasher loading).

No one in the industry had systematically measured the sim-to-real dexterity gap across so many tasks, end-effectors, and episodes. Centific’s benchmark is the first to put hard numbers on what the field has long suspected.

Centific’s technical capabilities

Building this benchmark required deep robotics engineering across multiple disciplines. Here is what the Centific team designed, built, and validated:

| Capability | What Centific built | Why it matters |
| --- | --- | --- |
| 4-Metric Evaluation Framework | TSR, DMS, GQS, GAR: four complementary metrics with pass/fail gates | Industry-first standardized way to measure dexterity quality |
| 29-Dataset Benchmark Pipeline | Ingest HuggingFace datasets, extract joint trajectories, compute metrics, generate reports | Reproducible, scalable evaluation across any teleoperation dataset |
| IK Retargeting Engine | Cross-embodiment retargeting that normalizes Dex1/Dex3/BrainCo data to a common 16-DOF Allegro joint space | Enables fair comparison across different robot hands; revealed that the gap narrows from ~50x to ~1.6x |
| Neural Retargeting Architecture | Production-ready infrastructure for ML-based retargeting (WaldoRT-compatible) | Ready to integrate with N1 Robotics or any neural retargeting model |
| Sim-to-Real Fine-tuning Pipeline | Alpha-annealing data mixer, asymmetric actor-critic (SAC), dual replay buffer with cross-trial recycling | Implements latest RL research for stable real-world policy fine-tuning |
| Multi-Domain Benchmark Framework | 4 benchmark domains: Airport Contact (B1), PCB Assembly (B2), Egocentric Transfer (B3), Dexterous Manipulation (B4) | Extensible architecture: new domains plug in without breaking existing ones |
| NVIDIA Cosmos Reason 2 Integration | Verification and error-correction pipeline powered by Cosmos Reason 2 video understanding | AI-powered quality assurance for robotic manipulation episodes |

The insight only this benchmark could reveal

The most important finding from Centific’s benchmark was not the ~50x gap itself. It was what happened when we applied retargeting: the gap collapsed from ~50x to under 2x. This proved that the massive raw gap was largely a measurement artifact caused by comparing 1-DOF grippers against 16-DOF simulated fingers. The true dexterity gap is much smaller, but it can only be seen through proper retargeting.

This result shows what retargeting changes. When joint spaces are aligned, the observed difference reflects actual behavior rather than differences in embodiment. It also shows that teleoperated data contains more useful information than raw comparisons suggest, because human operators encode stable grasp strategies before failure occurs.
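To make "aligning joint spaces" concrete, here is a minimal sketch of one way to express a 1-DOF gripper command in a 16-DOF joint space, by interpolating between an open and a closed reference pose. It is not the benchmark's retargeting engine, whose internals the article does not describe. The pose values and the `retarget_gripper` name are hypothetical.

```python
from typing import List

ALLEGRO_DOF = 16  # 4 fingers x 4 joints, as in the simulated hand baseline

# Hypothetical open and closed reference poses in radians. Real values would
# come from the target hand's joint limits and a calibrated grasp pose.
OPEN_POSE: List[float] = [0.0] * ALLEGRO_DOF
CLOSED_POSE: List[float] = [0.3, 1.2, 1.0, 0.8] * 4


def retarget_gripper(opening: float) -> List[float]:
    """Map a normalized gripper opening (1.0 open, 0.0 closed) to 16 joints."""
    opening = min(max(opening, 0.0), 1.0)  # clamp out-of-range commands
    closure = 1.0 - opening
    return [o + closure * (c - o) for o, c in zip(OPEN_POSE, CLOSED_POSE)]


half_closed = retarget_gripper(0.5)
print(len(half_closed), half_closed[:4])
```

Once both the simulated and the real trajectories live in the same 16-joint space, a per-joint metric compares like with like instead of penalizing the gripper for joints it does not have.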

This insight has direct commercial implications: it means the problem is solvable. With the right retargeting technology, like N1 Robotics’ WaldoRT, the remaining gap can be closed with far less data than anyone previously assumed. Centific's benchmark is the evidence base that makes this case quantitatively.

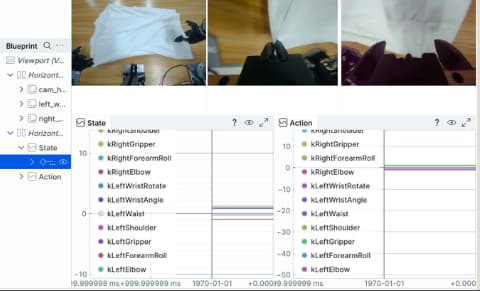

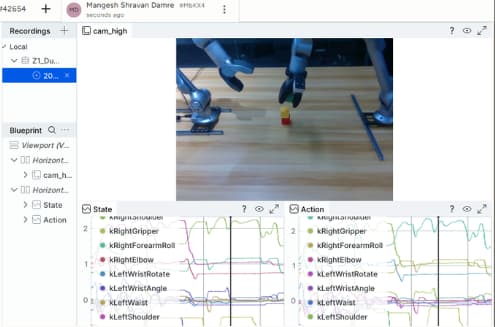

Figure 3: Centific’s data collection pipeline — robot joint state recordings showing State and Action telemetry across manipulation tasks in the B4 benchmark.

Figure 4: Centific benchmark episode — robot arm performing block manipulation task with real-time joint trajectory capture, part of Centific's 29-dataset evaluation framework.

The teleoperation bottleneck: why collecting data is still too slow

If teleoperation data is so valuable, why isn’t everyone collecting it at scale? Because the current process is painfully slow.

| Challenge | Industry Reality |
| --- | --- |
| Setup time | 2-3 weeks of custom engineering to integrate XR trackers with a new robot |
| Collection speed | ~50 episodes per hour after accounting for resets and operator fatigue |
| Retargeting | Linear IK mapping that destroys the grasp intent the operator was trying to convey |
| Cross-embodiment | Every new robot hand requires a custom retargeting pipeline from scratch |

The industry standard is linear Inverse Kinematics (IK) retargeting — mapping human joint angles to robot joint angles through geometric correspondence. This sounds reasonable, but it systematically destroys exactly the signals that make teleoperation data valuable.

When a human pinches an object between thumb and index finger, the IK mapper sees two joint angles. It maps them independently to the robot’s two corresponding joints. But the pinch isn’t about individual joint angles; it’s about the coordinated closure that creates force closure on the object. Linear IK strips away this coordination, the grasp intent, and the precision geometry. What remains is a lossy approximation that requires many more demonstrations to learn the same policy.
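A toy planar model shows why mapping joints independently can lose a pinch. The sketch below uses the simplest form of geometric correspondence, copying joint angles one-to-one, with two-link fingers whose link lengths differ between the human and the robot. The geometry is invented for illustration and does not model any real hand.

```python
import math
from typing import Tuple

Point = Tuple[float, float]


def fingertip(base_x: float, link: float, q1: float, q2: float) -> Point:
    """Forward kinematics of a planar two-link finger with equal link lengths."""
    x = base_x + link * math.cos(q1) + link * math.cos(q1 + q2)
    y = link * math.sin(q1) + link * math.sin(q1 + q2)
    return (x, y)


def pinch_gap(link: float, thumb_q: Point, index_q: Point) -> float:
    """Distance between the two fingertips; finger bases sit at x = -2 and x = +2."""
    thumb = fingertip(-2.0, link, *thumb_q)
    index = fingertip(2.0, link, *index_q)
    return math.dist(thumb, index)


# Human pinch: both fingers bend 90 degrees so the tips meet at (0, 2).
thumb_q = (math.pi / 2, -math.pi / 2)
index_q = (math.pi / 2, math.pi / 2)

human_gap = pinch_gap(2.0, thumb_q, index_q)  # human links: 2.0 units
robot_gap = pinch_gap(1.5, thumb_q, index_q)  # robot links: 1.5 units, same angles

print(f"human gap: {human_gap:.2f}, robot gap: {robot_gap:.2f}")
```

With identical joint angles the human fingertips touch, while the shorter robot fingers stop 1.0 unit apart. Each joint was mapped faithfully, yet the contact that defined the pinch is gone.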

Neural retargeting: the breakthrough that changes everything

Here is where the pieces connect. Centific’s benchmark used Unitree’s G1 robot dataset, collected with VR headsets and basic IK retargeting. We found massive gaps, especially in grasp adaptiveness. What if the same Unitree G1 data were collected with a system that preserves grasp intent instead of destroying it?

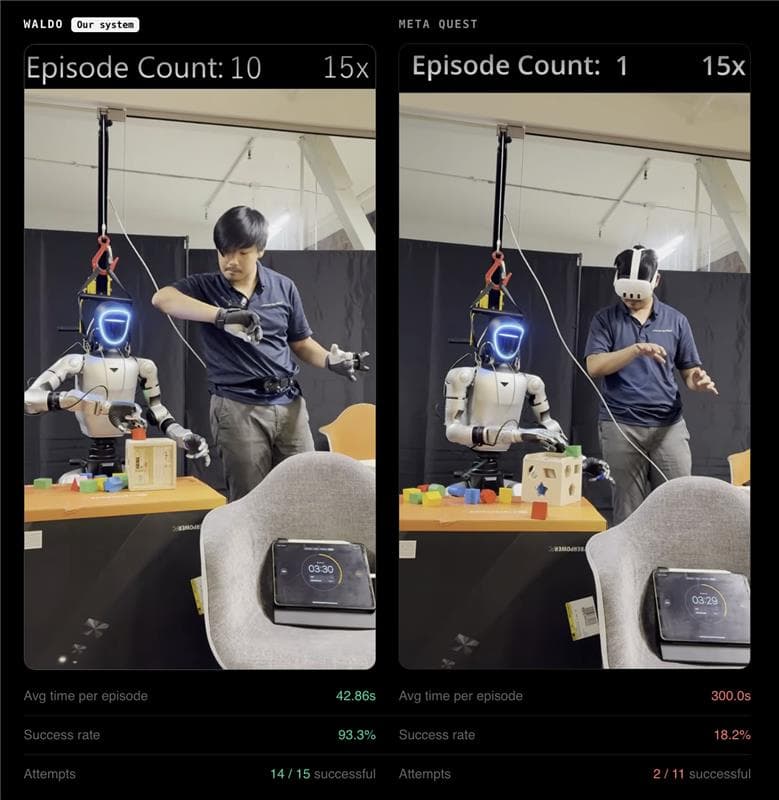

That system exists. N1 Robotics’ Waldo is built for the same Unitree G1 humanoid that our benchmark is based on, but it replaces the VR + IK retargeting pipeline with neural retargeting that faithfully captures what the human operator intended.

Same robot, better data: Waldo on the Unitree G1

Waldo is a complete dexterous teleoperation platform designed specifically for the Unitree G1 humanoid (29-DOF) with BrainCo Revo 2 hands (6-DOF per hand), the same robot and one of the same end-effectors in our benchmark. It includes finger-tracking gloves with EMF sensors, Vive trackers for 6-DOF wrist tracking, a pre-configured inference PC, and software that handles calibration, recording, and export out of the box.

| Capability | Industry baseline | Waldo |
| --- | --- | --- |
| Setup time | 2-3 weeks | 15 minutes |
| Collection speed | ~50 episodes/hr | ~200 episodes/hr |
| End-to-end latency | Variable, often >200 ms | <100 ms |
| Retargeting method | Linear IK (lossy) | WaldoRT neural mapping (preserves intent) |

Using Waldo for teleoperation results in a 4x speedup in data collection and a more than 100x reduction in setup time. For example, Unitree’s DiverseManip dataset, the same dataset Centific benchmarked, took an estimated 20 hours to collect using VR headsets. With Waldo on the same G1 robot, an equivalent dataset can be collected in roughly 5 hours, with higher fidelity per episode due to neural retargeting.
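The 5-hour figure follows from scaling the estimated 20 hours by the ratio of the two collection rates. A small sketch of that arithmetic, with a hypothetical helper name, assuming collection time scales inversely with episodes per hour:

```python
def collection_hours(
    baseline_hours: float, baseline_rate: float, new_rate: float
) -> float:
    """Scale a known collection time by the ratio of episode rates."""
    if baseline_rate <= 0 or new_rate <= 0:
        raise ValueError("rates must be positive")
    return baseline_hours * baseline_rate / new_rate


speedup = 200 / 50  # ~200 episodes/hr with Waldo vs ~50 episodes/hr baseline
waldo_hours = collection_hours(20.0, baseline_rate=50.0, new_rate=200.0)
print(f"speedup: {speedup:.0f}x, estimated hours: {waldo_hours:.0f}")
```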

Figure 5: Waldo vs Meta Quest — side-by-side comparison. Waldo (left): Episode Count 4, Avg 42.86s per episode, 93.3% success rate, 14/15 successful. Meta Quest (right): Episode Count 1, Avg 300s per episode, 18.2% success rate, 2/11 successful. Same robot, same task, same time window.

Neural retargeting in action

Neural retargeting is the mechanism that makes teleoperation data usable for training.

Figure 6: WaldoRT Neural Retargeting in action — human hand pose (left) mapped to robot hand pose (right) using neural network inference at 1 kHz. The model preserves finger coordination and grasp intent that IK mapping destroys. This is the core technology that collapses the 50× gap to under 2×.

This figure shows how neural retargeting maps human hand motion to robot control in real time. The mapping preserves coordination across fingers and maintains grasp intent during execution.

N1 Robotics partnership: what this means for Centific

Centific is partnering with N1 Robotics to bring Waldo into our large-scale data collection pipeline. Key details from our partnership:

WaldoRT runs inference at 1 kHz: lightweight, fast, and scales identically from 6-DOF to higher DOF hands with zero latency increase

Setup takes under 30 seconds: push a pedal and data collection begins immediately

Time between episodes can be as short as 5 seconds, enabling rapid rinse-and-repeat collection loops

Inference PC ships pre-configured with an NVIDIA GPU, which is compatible with Centific’s Jetson Thor infrastructure

Multi-operator profiles are pre-saved: operators clock in ready to collect without hand retraining

Enterprise plan includes 100 hours per month of N1 Robotics managed operator time on Centific’s systems

Custom integration builds available for additional end-effectors and robot embodiments beyond humanoids

These capabilities make it possible to collect high-fidelity manipulation data quickly and consistently across operators, tasks, and robot configurations.

CaP-X: when frontier LLMs become robot controllers

A parallel development is reshaping how we think about robot control entirely. CaP-X (Coding as Policies), available at capgym.github.io, demonstrates that frontier large language models can control robots, without any robot-specific training, simply by writing executable control code from natural language task descriptions.

CaP-Agent0: zero-shot manipulation

CaP-Agent0 is a training-free coding agent evaluated across 100+ manipulation tasks spanning LIBERO-PRO, Robosuite, and BEHAVIOR. The findings:

Frontier models achieved over 30% average zero-shot success on manipulation, with no task-specific training

On LIBERO-PRO with position and instruction perturbations, state-of-the-art VLAs like OpenVLA and pi0 scored 0% across the board

Even the best VLA (pi0.5) reached only 13% average success on perturbed tasks

CaP-Agent0 reached 18% (better than the best VLA) without any training

These results show that training alone does not guarantee robustness, and that alternative approaches can outperform learned policies even without task-specific optimization.

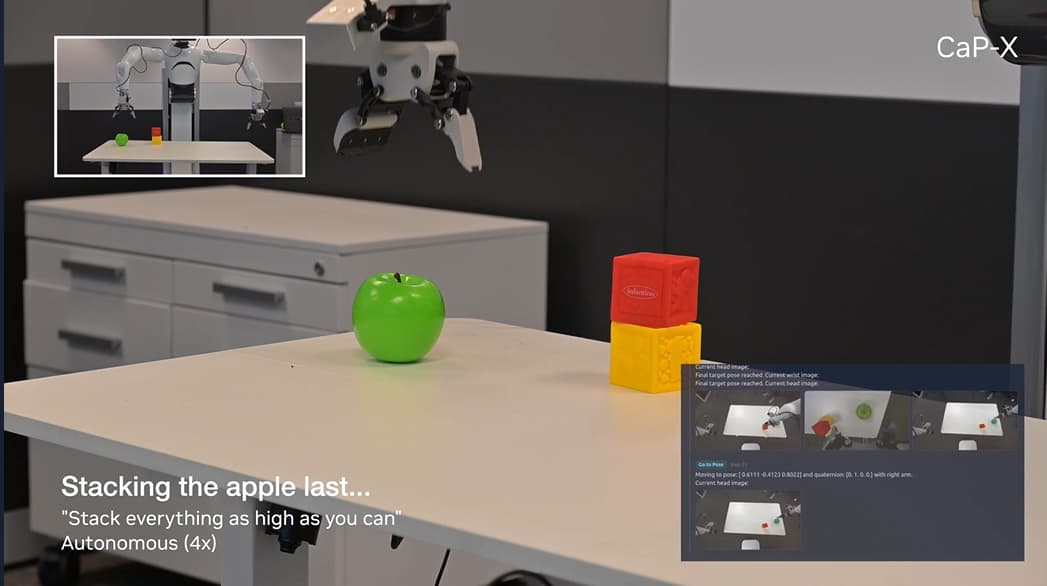

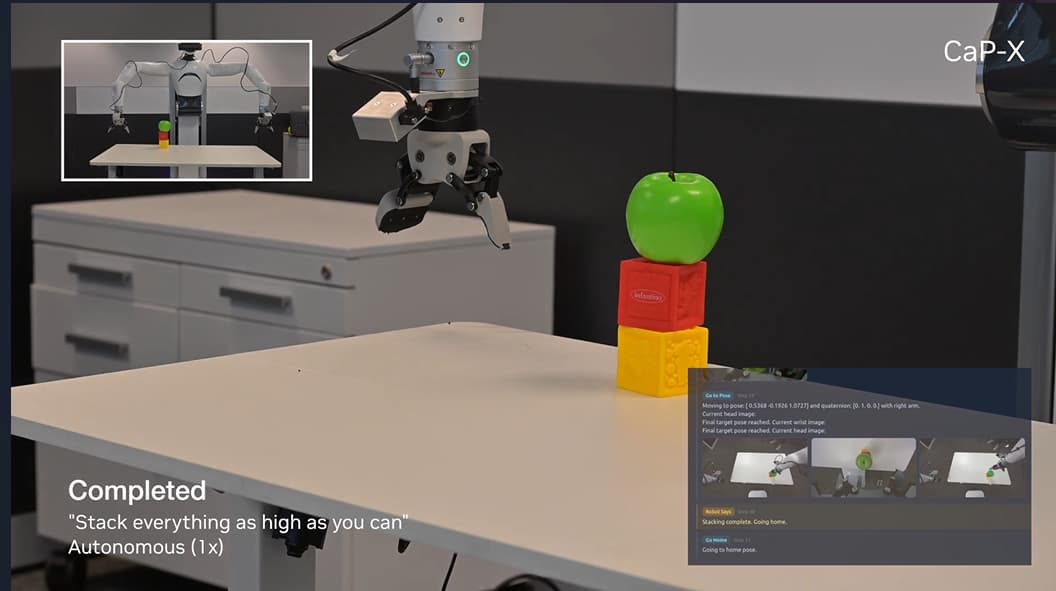

Figure 7: CaP-Agent0 in action: robot recognizes the affordance of stacking order, placing cubes before the round apple. The instruction: “Stack everything as high as you can.” Autonomous execution (4x speed). Source: capgym.github.io

Figure 8: CaP-Agent0 task completed. Cubes stacked with apple on top, demonstrating embodied reasoning about object geometry without any task-specific training. Source: capgym.github.io
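To give a feel for what "writing executable control code" can mean, the sketch below shows the kind of small program an agent might produce for the stacking instruction in Figure 7. It is an illustration only, not actual CaP-X output. The `SceneObject` type, its fields, and `plan_stack` are hypothetical and do not correspond to any CaP-X API.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class SceneObject:
    name: str
    flat_top: bool   # can another object rest on it?
    width_cm: float


def plan_stack(objects: List[SceneObject]) -> List[str]:
    """Return a bottom-to-top stacking order.

    Flat-topped objects go first, widest at the bottom. Objects that cannot
    support anything (such as a round apple) are placed last.
    """
    ordered = sorted(objects, key=lambda o: (not o.flat_top, -o.width_cm))
    return [o.name for o in ordered]


scene = [
    SceneObject("apple", flat_top=False, width_cm=7.0),
    SceneObject("small_cube", flat_top=True, width_cm=4.0),
    SceneObject("large_cube", flat_top=True, width_cm=6.0),
]
order = plan_stack(scene)
print(order)
```

The resulting order places both cubes before the apple, matching the behavior described in the figure captions. In a real system each name in the plan would be handed to perception and motion primitives for execution.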

CaP-RL: code-based post-training

CaP-RL applies reinforcement learning directly to the coding agent. A 7B-parameter model (Qwen 2.5 Coder) jumped from 20% to 72% average success in simulation after just 50 training iterations. The learned policies then transferred to a real Franka robot (84% on cube lifting, 76% on cube stacking) with minimal sim-to-real gap.

Why CaP-X matters for this pipeline

CaP-X addresses the task generalization layer, allowing robots to handle novel instructions without retraining. When CaP-Agent0 generates task code and WaldoRT-collected episodes provide the fine-grained manipulation signal, they cover complementary failure modes. CaP-X handles novel instructions and task reasoning; WaldoRT handles the physical contact fidelity that code alone cannot capture.

The complete pipeline: from simulation to deployment

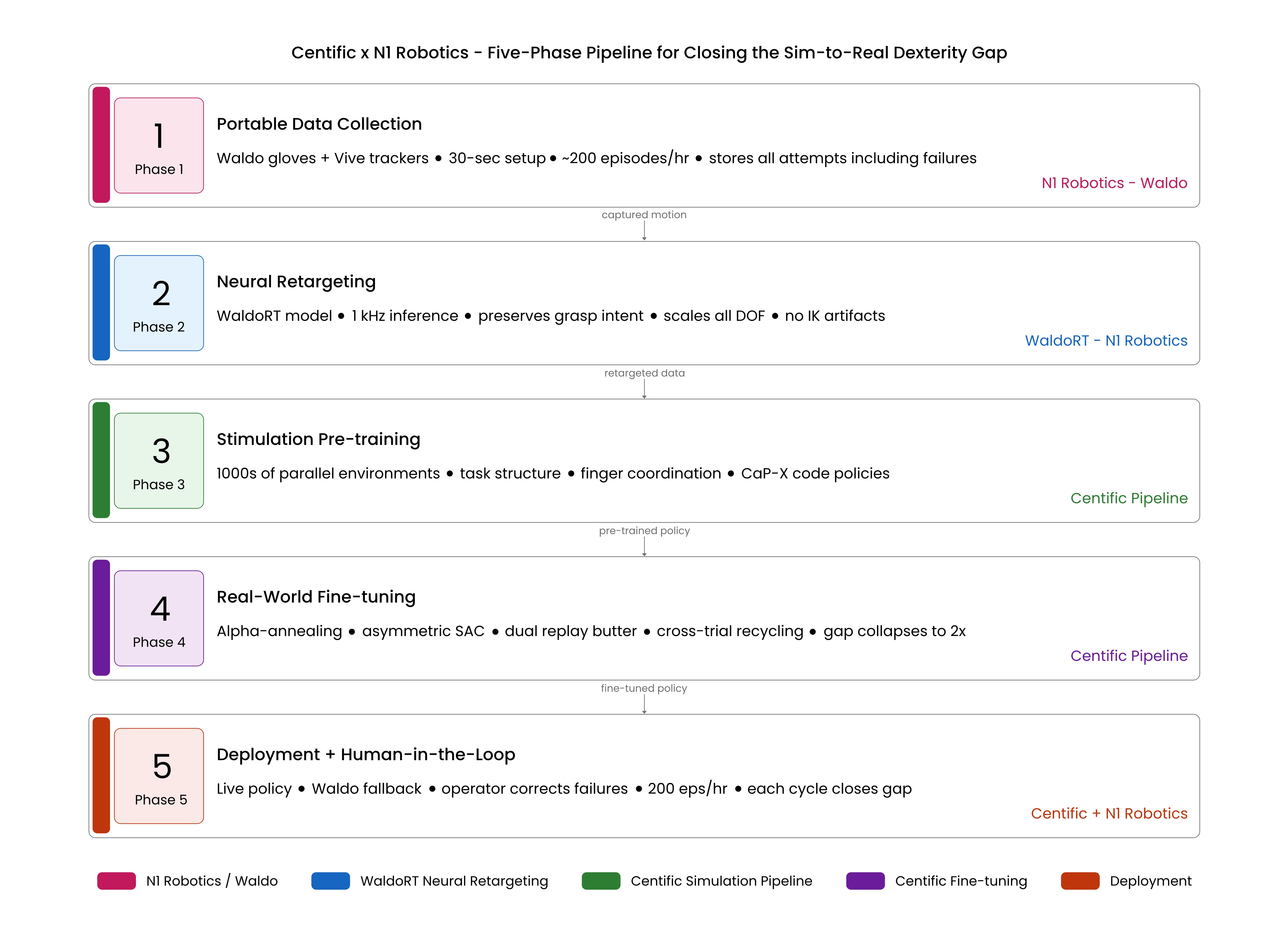

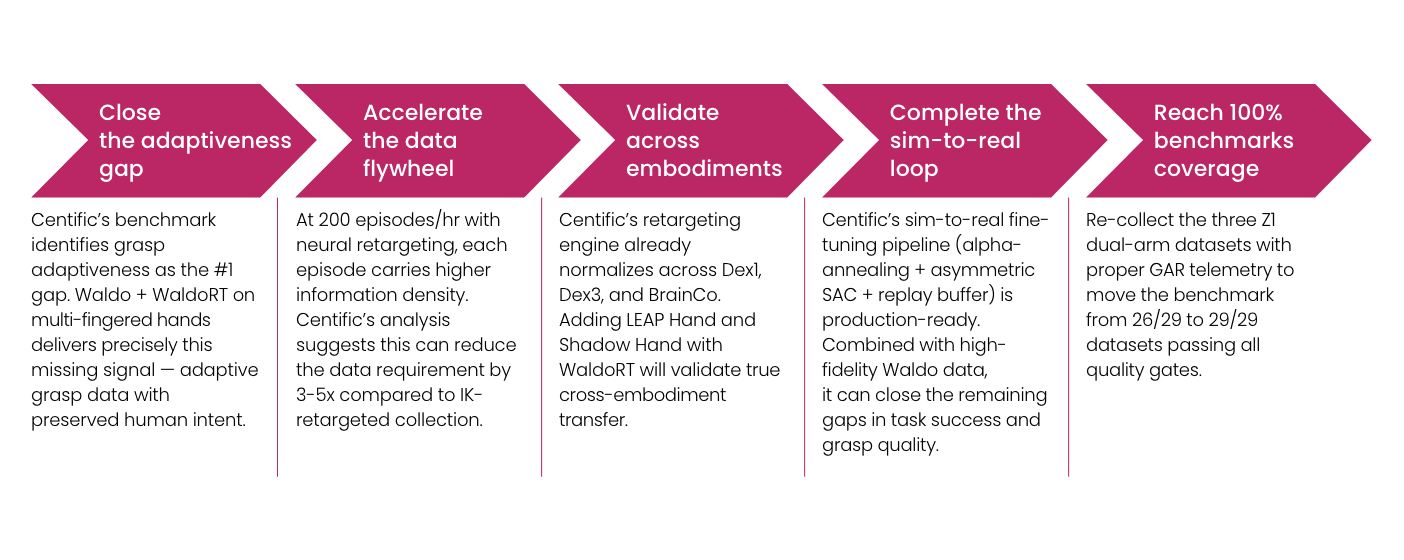

Our benchmark, combined with recent advances in data-efficient reinforcement learning, points to a five-phase architecture for closing the sim-to-real dexterity gap:

Figure 9: Five-phase pipeline for closing the sim-to-real dexterity gap — Phase 1: Portable Data Collection → Phase 2: Neural Retargeting (WaldoRT) → Phase 3: Simulation Pre-training → Phase 4: Real-World Fine-tuning → Phase 5: Deployment with Human-in-the-Loop Correction.

Phase 1: portable data collection

Capture human manipulation with wearable motion-capture rigs (finger tracking gloves + wrist trackers). No robot needed during collection. Approaches like DexCap (RSS 2024) achieve 3x the speed of traditional teleoperation at a fraction of the cost. Critically, store all attempts, including failures, because mixed-quality data provides better state-space coverage than expert-only demonstrations.

Phase 2: neural retargeting

Map human hand motions to robot hand commands using learned models like WaldoRT. This preserves grasp intent, force closure, and finger coordination at 1-2ms per frame, compatible across different end-effectors without custom engineering per robot.

Phase 3: simulation pre-training

Train policies in massively parallel simulation, with thousands of environments running simultaneously, to learn task structure such as approach trajectories, finger coordination patterns, and basic manipulation sequences. Our benchmark shows that simulation transfers task-level structure well, with an approximately 1.1x gap in success rate, even though contact-level details remain poor.

Phase 4: real-world fine-tuning

Fine-tune sim-pretrained policies on real teleoperation data using stabilized RL techniques: alpha-annealing data mixing (gradually shifting from sim to real data), asymmetric actor-critic updates, and warm-start episodes. Research shows these techniques prevent the catastrophic forgetting that typically destroys sim-pretrained policies during real-world fine-tuning.
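Alpha-annealing data mixing can be sketched as a schedule that controls how much of each training batch comes from simulation. The version below assumes a linear schedule and takes alpha to be the simulated share of the batch. The article does not specify either choice, and all names are illustrative.

```python
import random
from typing import List, Sequence, TypeVar

T = TypeVar("T")


def sim_fraction(step: int, anneal_steps: int) -> float:
    """Linearly anneal the share of simulated data from 1.0 down to 0.0."""
    if anneal_steps <= 0:
        return 0.0
    return max(0.0, 1.0 - step / anneal_steps)


def mixed_batch(
    sim_data: Sequence[T],
    real_data: Sequence[T],
    step: int,
    anneal_steps: int,
    batch_size: int,
    rng: random.Random,
) -> List[T]:
    """Draw a batch whose sim/real split follows the annealed fraction."""
    n_sim = round(sim_fraction(step, anneal_steps) * batch_size)
    n_real = batch_size - n_sim
    batch = [rng.choice(sim_data) for _ in range(n_sim)]
    batch += [rng.choice(real_data) for _ in range(n_real)]
    rng.shuffle(batch)
    return batch


rng = random.Random(0)
sim = [("sim", i) for i in range(100)]
real = [("real", i) for i in range(100)]

early = mixed_batch(sim, real, step=0, anneal_steps=1000, batch_size=8, rng=rng)
middle = mixed_batch(sim, real, step=500, anneal_steps=1000, batch_size=8, rng=rng)
late = mixed_batch(sim, real, step=1000, anneal_steps=1000, batch_size=8, rng=rng)
print([sum(1 for src, _ in b if src == "sim") for b in (early, middle, late)])
```

Batches start fully simulated, reach an even split halfway through, and end fully real, so the policy is never switched abruptly onto a small real dataset.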

Phase 5: deployment + human-in-the-loop correction

Deploy the fine-tuned policy with live Waldo teleoperation for human-in-the-loop correction. When the policy fails, the operator takes over, generating the hardest and most valuable training data. At 200 episodes per hour, correction data accumulates rapidly. Each cycle closes the gap further.
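The takeover logic can be sketched as a single control step that falls back to the operator and records the correction. How a failure is detected is not specified in the article, so `needs_help` below is a placeholder, and the scalar observations and actions are stand-ins for real sensor and command data.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple

Observation = float
Action = float


@dataclass
class CorrectionLog:
    """Stores (observation, operator action) pairs for later fine-tuning."""
    samples: List[Tuple[Observation, Action]] = field(default_factory=list)


def run_step(
    obs: Observation,
    policy: Callable[[Observation], Action],
    needs_help: Callable[[Observation, Action], bool],
    operator: Callable[[Observation], Action],
    log: CorrectionLog,
) -> Action:
    """Execute the policy action unless the failure check hands control over."""
    action = policy(obs)
    if needs_help(obs, action):
        action = operator(obs)
        log.samples.append((obs, action))  # keep only the corrected steps
    return action


log = CorrectionLog()
actions = [
    run_step(
        obs,
        policy=lambda o: 0.5,                   # toy policy: constant command
        needs_help=lambda o, a: abs(a - o) > 0.2,
        operator=lambda o: o,                   # toy operator: tracks the target
        log=log,
    )
    for obs in (0.5, 0.6, 0.9)
]
print(actions, log.samples)
```

Only the step where the policy drifts too far from the target is handed to the operator, and that step is the one saved for the next round of fine-tuning.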

Centific + neural retargeting: the roadmap

Centific’s benchmark provides evidence of where simulation falls short and how large the gap remains in real-world performance. The five-phase pipeline defines the architecture. Neural retargeting platforms like N1 Robotics’ Waldo provide the tooling. Together, they form a concrete roadmap for building the data infrastructure that next-generation robotics demands.

Why this matters for foundation models

The next frontier in robotics is dexterous foundation models: vision-language-action (VLA) models that can reason about finger placement, contact forces, and in-hand manipulation from natural language instructions. Models like RT-2, Octo, and pi0 have shown this is possible for simple grippers. But extending to multi-fingered dexterity requires millions of teleoperated episodes with rich grasp data, which is the kind of data that Centific’s pipeline is designed to produce and that neural retargeting makes feasible to collect at scale.

Implications for dexterous manipulation training

The gap between simulated and real-world dexterous manipulation is a fundamental measure of how much real-world contact physics matters and how much of that knowledge can only come from human-guided teleoperation.

Simulation gives you task structure. Teleoperation gives you contact truth. Neural retargeting ensures that contact truth is preserved when mapping human motion to robot control.

The implications extend beyond model performance. The constraint is not simulation quality alone, but the data used to train policies. Robotics companies that invest in teleoperation infrastructure and collect high-fidelity manipulation data will have an advantage, because their models are trained on the contact behavior that determines real-world outcomes.

| Dimension | Simulation | Teleoperation | Winner |
| --- | --- | --- | --- |
| Task success | Higher scores | Harder, but grounded in reality | Teleop |
| Manipulation accuracy | Near-perfect | Imperfect but true | Teleop |
| Grasp quality | Optimized for wrong metric | Messier, but functional | Teleop |
| Grasp adaptiveness | Scripted, artificial | Anticipatory, human-intelligent | Teleop |
| Recovery behaviors | Grasps don't fail in sim | 1 in 4 episodes includes recovery | Teleop |
| Data fidelity | Perfect but wrong | Imperfect but true | Teleop |
| Setup cost (with Waldo) | GPU cluster + months | ~$12K hardware + 15 min | Teleop |
