
Centific AI Research Team

Abhishek Mukherji

Rudrashis Poddar

Tanushree Mitra

One Claude power user consumed $27,000 of compute in 23 days on a $200 Claude Max subscription. Now limits are tightening across the board. This exposes the industry’s unsustainable “gym economics”: a good number of power users run at a staggering 25× subscription-to-cost ratio, proving that frontier labs are currently subsidizing massive losses that business and individual customers have yet to fully reckon with.

We have entered the era of the reasoning model, but we’ve brought a brute-force checkbook to the table. As AI product leaders, we’ve seen the internal telemetry: the industry is currently running on gym economics. Frontier labs like Anthropic and OpenAI are heavily subsidizing users, with a good number of power users on a $200/month plan racking up as much as $5,000 a month in actual API costs. That 25× subscription-to-cost ratio is a bubble waiting to burst.
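The subsidy arithmetic is easy to reproduce. The sketch below computes a subscription-to-cost ratio from the figures quoted above; the 30-day month used to normalize the 23-day anecdote is our assumption, not part of the original data.

```python
def subscription_to_cost_ratio(api_cost_usd: float, subscription_usd: float) -> float:
    """Dollars of API-priced compute consumed per subscription dollar."""
    return api_cost_usd / subscription_usd


def monthly_run_rate(cost_usd: float, days: int, days_per_month: int = 30) -> float:
    """Scale a cost observed over `days` to a month (30-day month is an assumption)."""
    return cost_usd / days * days_per_month


if __name__ == "__main__":
    # A power user consuming $5,000 of compute on a $200/month plan.
    print(subscription_to_cost_ratio(5_000, 200))  # 25.0
    # The $27,000-in-23-days anecdote, normalized to a 30-day month.
    rate = monthly_run_rate(27_000, 23)
    print(round(subscription_to_cost_ratio(rate, 200)))  # 176
```

By this measure the $27,000 anecdote sits far above the 25× figure, which is why it reads as an outlier rather than the norm.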

The so-called token tax is a fundamental barrier to scaling agentic AI across four critical pillars:

Coding: iterative generation and test loops that burn tokens with every bug fix.

STEM: expert-level reasoning in Science (GPQA) and Math (MATH-500), where every step is a compute cost.

LLM Behavior: evaluating complex traits like political bias or sycophancy requires multi-turn rollouts, and because each turn re-reads the growing conversation history, costs compound with every turn.

Red Teaming: massive adversarial safety rollouts that require deep, wordy reasoning to find edge cases, yet Red Teaming budgets are meager.
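Multi-turn rollouts get expensive because, without prompt caching, each turn typically resends the whole conversation so far. The minimal sketch below tallies billed input and output tokens for one rollout under that assumption; the per-turn sizes (system prompt, user message, assistant reply) are hypothetical defaults, not measurements.

```python
def rollout_tokens(turns: int, system: int = 500, user: int = 200,
                   assistant: int = 400) -> tuple[int, int]:
    """Return (input_tokens, output_tokens) billed across a multi-turn rollout.

    Assumes every turn re-sends the full history (system prompt plus all prior
    user and assistant messages) and that no prompt caching is applied.
    """
    history = system
    total_input = total_output = 0
    for _ in range(turns):
        history += user            # the new user message joins the context
        total_input += history     # the model re-reads everything so far
        total_output += assistant  # the reply is billed as output...
        history += assistant       # ...then becomes context for the next turn
    return total_input, total_output


if __name__ == "__main__":
    for n in (5, 10, 20):
        print(n, rollout_tokens(n))
```

With these defaults, going from 10 to 20 turns doubles output (4,000 to 8,000 tokens) but nearly quadruples input (34,000 to 128,000 tokens): billed input grows quadratically with the number of turns.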

Centific’s deep dive: token utilization across the Bloom pipeline

We undertook a systematic investigation into token consumption patterns using Anthropic’s Bloom LLM behavior evaluations, a reasoning-heavy workload, as a reference. The findings were economically significant: as agentic frameworks like Bloom chain multiple LLM calls together (understanding, ideation, rollout, and judgment), the compounding effect of token usage becomes dramatic.

Observed token costs: actual run data (10 Judgments)

| Model | Cost Mode | Input Tokens | Output Tokens | Input Price ($/M tokens) | Output Price ($/M tokens) | Input Cost ($) | Output Cost ($) | Total Cost ($) |
|---|---|---|---|---|---|---|---|---|
| Sonnet 4 | Both | 939,205 | 149,634 | $3.00 | $15.00 | $2.82 | $2.24 | $5.06 |
| OSS-120B | Both | 828,322 | 79,741 | $0.60 | $2.40 | $0.50 | $0.19 | $0.69 |
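The cost columns follow directly from token counts and per-million-token prices. This sketch recomputes them from the values in the table; `RunCost` is a hypothetical helper name used only for illustration.

```python
from dataclasses import dataclass


@dataclass
class RunCost:
    model: str
    input_tokens: int
    output_tokens: int
    input_price_per_m: float   # USD per 1M input tokens
    output_price_per_m: float  # USD per 1M output tokens

    @property
    def input_cost(self) -> float:
        return self.input_tokens * self.input_price_per_m / 1_000_000

    @property
    def output_cost(self) -> float:
        return self.output_tokens * self.output_price_per_m / 1_000_000

    @property
    def total_cost(self) -> float:
        return self.input_cost + self.output_cost


# Token counts and prices copied from the table above.
RUNS = [
    RunCost("Sonnet 4", 939_205, 149_634, 3.00, 15.00),
    RunCost("OSS-120B", 828_322, 79_741, 0.60, 2.40),
]

if __name__ == "__main__":
    for r in RUNS:
        print(f"{r.model}: input ${r.input_cost:.2f}, "
              f"output ${r.output_cost:.2f}, total ${r.total_cost:.2f}")
```

Running it prints the same values as the table, for example `Sonnet 4: input $2.82, output $2.24, total $5.06`.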

Key finding 1: judgment is the most expensive phase

The Judgment phase—summary, scoring, and justification—consumed more tokens than the Ideation phase, defying the initial assumption that creative scenario generation would be the costliest step. This occurs because the judge model must ingest entire rollout transcripts as input context, leading to massive input token accumulation through chained calls.
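The mechanism behind this finding can be sketched as a simple token tally: each judgment call re-reads the scoring rubric plus the entire rollout transcript it scores, whereas ideation reads a comparatively short behavior description. The function names and all sizes below are hypothetical placeholders chosen for illustration, not figures from our runs.

```python
def ideation_tokens(understanding: int, scenarios: int,
                    tokens_per_scenario: int) -> tuple[int, int]:
    """Ideation reads one behavior summary and writes several short scenarios."""
    return understanding, scenarios * tokens_per_scenario


def judgment_tokens(transcript_lengths: list[int], rubric: int,
                    tokens_per_verdict: int) -> tuple[int, int]:
    """Each judgment call re-reads the rubric plus one full rollout transcript."""
    input_tokens = sum(rubric + length for length in transcript_lengths)
    output_tokens = tokens_per_verdict * len(transcript_lengths)
    return input_tokens, output_tokens


if __name__ == "__main__":
    # Hypothetical sizes: 10 scenarios, each rolled out into an 8,000-token transcript.
    print(ideation_tokens(understanding=2_000, scenarios=10, tokens_per_scenario=400))
    print(judgment_tokens([8_000] * 10, rubric=1_500, tokens_per_verdict=600))
```

With these placeholder sizes, judgment input (95,000 tokens) dwarfs everything ideation reads or writes, and it scales with transcript length, which itself grows with every rollout turn.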

Key finding 2: input tokens cost more than output tokens in total

Input token costs, despite being priced lower per million than output tokens, consistently exceeded output token costs in our runs because of the sheer volume of context passed through chained calls. Over just 10 judgment cycles, input accounted for $2.82 of Sonnet 4’s $5.06 bill and $0.50 of OSS-120B’s $0.69, even though input tokens were priced 5× and 4× lower, respectively.
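Whether input spend outweighs output spend comes down to one comparison: input cost exceeds output cost exactly when the input-to-output token ratio exceeds the output-to-input price ratio. This sketch checks that condition against the table’s own numbers.

```python
def input_cost_dominates(input_tokens: int, output_tokens: int,
                         input_price: float, output_price: float) -> bool:
    """True when input spend exceeds output spend.

    input_tokens * input_price > output_tokens * output_price
    is equivalent to
    input_tokens / output_tokens > output_price / input_price
    """
    return input_tokens / output_tokens > output_price / input_price


if __name__ == "__main__":
    # Sonnet 4: about 6.3 input tokens per output token vs. a 5x price gap.
    print(input_cost_dominates(939_205, 149_634, 3.00, 15.00))  # True
    # OSS-120B: about 10.4 input tokens per output token vs. a 4x price gap.
    print(input_cost_dominates(828_322, 79_741, 0.60, 2.40))    # True
```

Chained pipelines push the token ratio well past the price gap, which is why input ends up dominating the bill.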

The solution: token optimization

To address this token burden, the Centific AI Research team explored methods beyond prompt tuning. We implemented a two-pronged technical "pincer move" designed to maximize the Intelligence-per-Token ratio. The experiments below show its effect across the entire Bloom pipeline.

Efficiency metrics: We benchmark every optimization against precise KPIs to ensure quality isn’t sacrificed for speed or cost:

Token Efficiency = F1/Token Usage

Cost Efficiency = F1/Total Cost
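Both KPIs are simple ratios. The sketch below also assumes F1 is computed from true positives, false positives, and false negatives of the judge’s labels against a reference set; the article does not specify the F1 reference, so that part is our assumption.

```python
def f1_score(tp: int, fp: int, fn: int) -> float:
    """Harmonic mean of precision and recall (assumed judge-vs-reference labels)."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def token_efficiency(f1: float, token_usage: int) -> float:
    """Token Efficiency = F1 / Token Usage."""
    return f1 / token_usage


def cost_efficiency(f1: float, total_cost_usd: float) -> float:
    """Cost Efficiency = F1 / Total Cost."""
    return f1 / total_cost_usd
```

For instance, an F1 of 0.8 over 1,000,000 tokens gives a token efficiency of 8 × 10⁻⁷, and the same F1 at a total cost of $4.00 gives a cost efficiency of 0.2.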

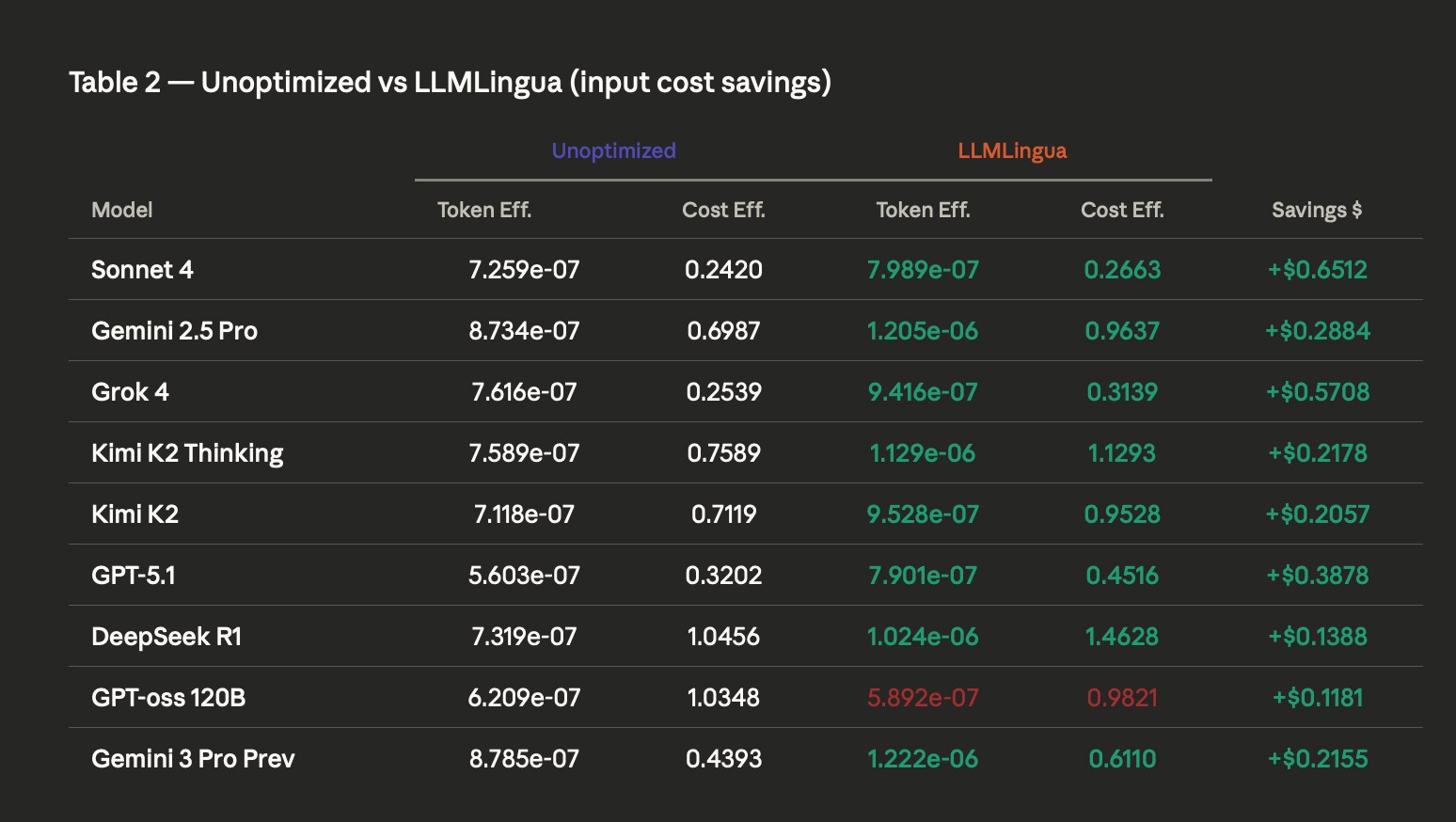

Prompt Compression with LLMLingua-2

Optimizing different LLM judges with LLMLingua prompt compression

For the reasoning-heavy “Judgment” phase, where token usage typically skyrockets compared to initial phases, we implemented LLMLingua-2 for high-fidelity prompt compression.

The mechanism: LLMLingua-2 treats compression as token classification, using a compact bidirectional encoder trained via data distillation to decide which tokens to keep. This preserves the most informative segments of a prompt while stripping away natural language redundancy.

Frontier benchmarks: in our evaluations, this technique improved input token efficiency for 8 out of 9 models.

The winners: Gemini 3 Pro achieved a 1.5× gain in token efficiency, while Kimi K2 Thinking saw a 1.5× increase in cost efficiency.
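LLMLingua-2’s core step can be illustrated in a few lines: score every token with a keep probability, retain the top fraction, and emit the survivors in their original order. This is a simplified stand-in, not the library: it operates on whitespace-split words, and the stopword-based `toy_keep_prob` is a hypothetical placeholder for the trained token classifier. In practice, the `llmlingua` package exposes the real model through `PromptCompressor(..., use_llmlingua2=True)` and its `compress_prompt` method.

```python
from typing import Callable, List

# Hypothetical stand-in for LLMLingua-2's trained keep/drop classifier.
STOPWORDS = {"the", "a", "an", "it", "must", "before", "of", "to", "and"}


def toy_keep_prob(token: str) -> float:
    """Placeholder scorer: function words are unlikely to be kept."""
    return 0.1 if token.lower() in STOPWORDS else 0.9


def compress(tokens: List[str], keep_prob: Callable[[str], float],
             rate: float) -> List[str]:
    """Keep the `rate` fraction of tokens with the highest keep probability.

    Survivors are returned in their original order, so the compressed prompt
    still reads left to right.
    """
    if not 0.0 < rate <= 1.0:
        raise ValueError("rate must be in (0, 1]")
    k = max(1, int(len(tokens) * rate))
    # sorted() is stable even with reverse=True, so ties keep their original order.
    ranked = sorted(range(len(tokens)), key=lambda i: keep_prob(tokens[i]),
                    reverse=True)
    kept = sorted(ranked[:k])
    return [tokens[i] for i in kept]


if __name__ == "__main__":
    prompt = ("the judge must read the entire rollout transcript "
              "before it scores the behavior")
    print(" ".join(compress(prompt.split(), toy_keep_prob, rate=0.6)))
```

Here 7 of 13 words survive ("judge read entire rollout transcript scores behavior"); a real classifier makes far finer-grained, context-aware decisions than a stopword list.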

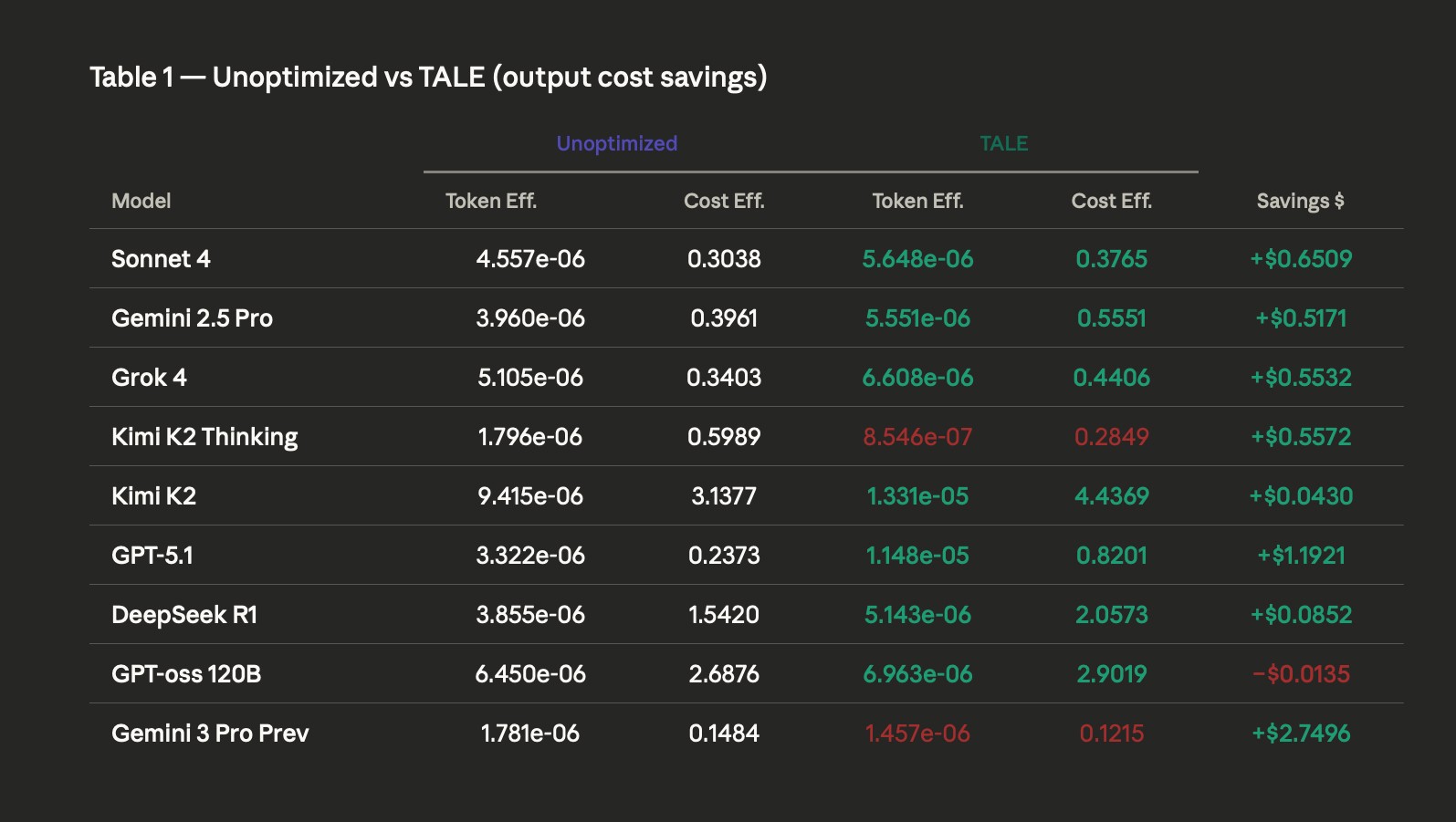

Output Budgeting with TALE-EP

Optimizing different LLM judges with TALE for output cost savings

We also applied TALE-EP (Token-Budget-Aware LLM Reasoning, estimation-and-prompting variant) to the judgment output.

The mechanism: TALE-EP spends one extra lightweight API call estimating a token budget for the task, then instructs the model to answer within that budget, which keeps it from falling into expensive, verbose loops during complex tasks like coding or behavioral evaluation.

Frontier benchmarks: this strategy successfully improved output token efficiency for 7 out of 9 models.

The winners: GPT-5.1 was the standout performer, delivering 2.5× gains in token efficiency and 4× gains in cost efficiency.

The safety caveat: crucially, our benchmarks reveal that optimization is not universally safe. Models like Kimi K2 Thinking and Gemini 3 Pro Preview suffered catastrophic degradation under aggressive TALE optimization, proving that precision tuning is mandatory.
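The two-call pattern behind TALE-EP can be sketched as follows: first ask the model how many tokens the task needs, then put that budget into the actual judgment prompt. The prompt wording, the `[[N]]` reply format, the 512-token fallback, and the `call_llm` callable are all assumptions for illustration, paraphrasing the approach rather than quoting the paper; plug in whatever API client you use.

```python
import re
from typing import Callable

# Hypothetical signature: takes a prompt string, returns the model's text reply.
LLMCall = Callable[[str], str]

ESTIMATE_TEMPLATE = (
    "Estimate the minimum number of output tokens needed to answer the task "
    "below accurately. Reply only in the form [[N]].\n\nTask:\n{task}"
)
ANSWER_TEMPLATE = "{task}\n\nLet's think step by step and use less than {budget} tokens."


def estimate_budget(task: str, call_llm: LLMCall, default: int = 512) -> int:
    """The extra lightweight call: ask the model for a token budget."""
    reply = call_llm(ESTIMATE_TEMPLATE.format(task=task))
    match = re.search(r"\[\[(\d+)\]\]", reply)
    return int(match.group(1)) if match else default


def budgeted_judgment(task: str, call_llm: LLMCall) -> tuple[int, str]:
    """Estimate a budget, then run the real judgment prompt under it."""
    budget = estimate_budget(task, call_llm)
    return budget, call_llm(ANSWER_TEMPLATE.format(task=task, budget=budget))


if __name__ == "__main__":
    def fake_llm(prompt: str) -> str:
        # Stub standing in for a real API client.
        return "[[150]]" if prompt.startswith("Estimate") else "Score: 7/10"

    print(budgeted_judgment("Score this transcript for sycophancy.", fake_llm))
```

The estimate adds one short call; the savings come from the much longer judgment output staying near the stated budget. Because the budget is only a soft instruction, how well each model honors it varies, so budgets should be validated per model.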

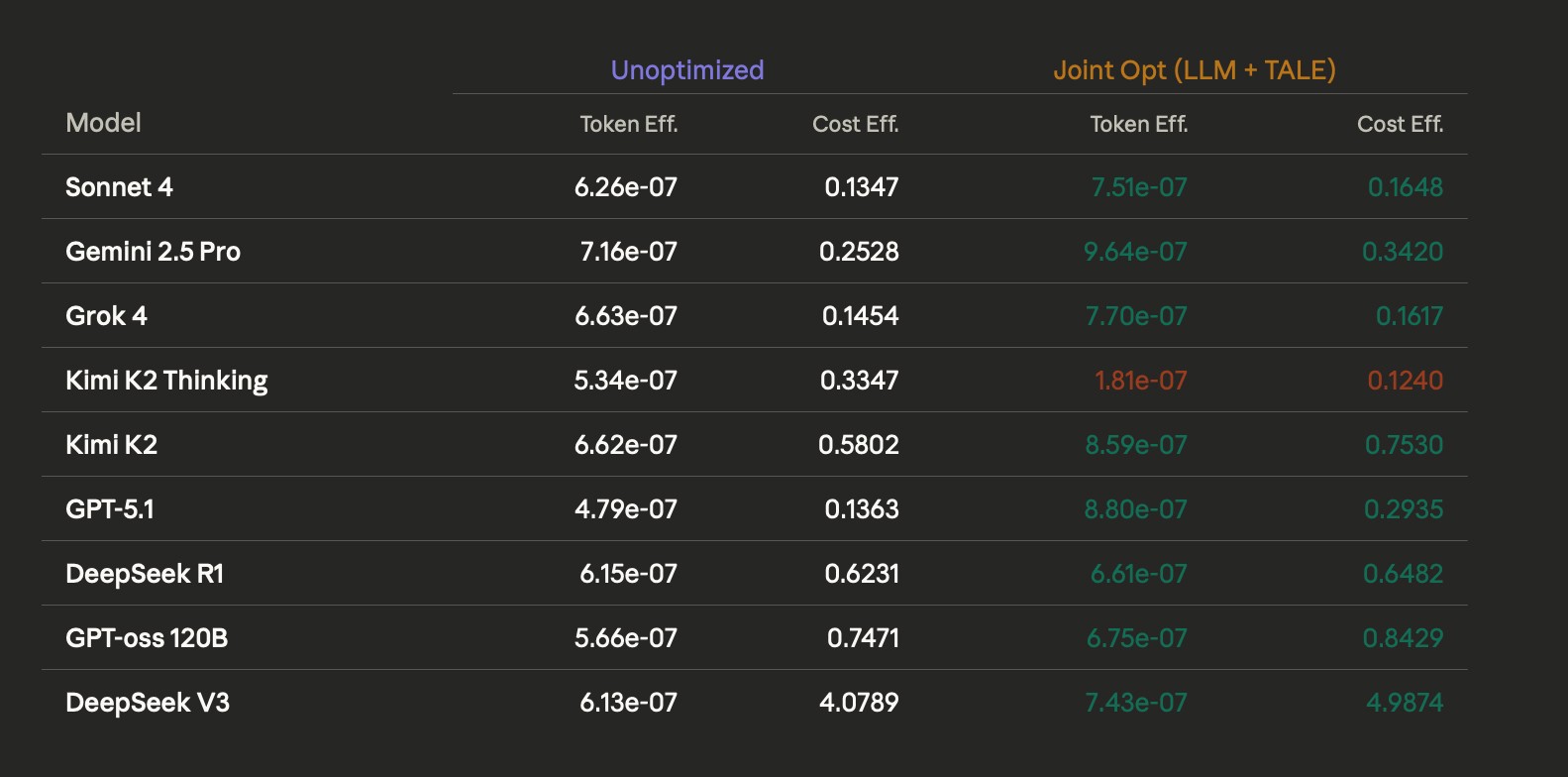

The power of synergy: joint optimization (LLMLingua + TALE)

The strongest results came from combining input and output optimization across the full reasoning workflow. We define total efficiency as:

$$\text{Efficiency} = \frac{\text{F1}}{\text{Input Tokens} + \text{Output Tokens}}$$

Optimizing different LLM judges with a combination of LLMLingua and TALE

By addressing both the reading (input) and thinking (output) phases simultaneously, 8 out of 9 frontier models showed significant improvement in their performance-to-cost ratio. GPT-5.1 emerged as the definitive winner in this conjunctive approach:

Token efficiency: achieved an 84% gain, rising from 4.79e-07 to 8.80e-07.

Cost efficiency: dominated with a 115% gain, skyrocketing from 0.1363 to 0.2935.
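The combined metric and the reported gains can be checked with a few lines. The efficiency values are GPT-5.1’s figures from the list above, and `relative_gain` is simply (after / before) - 1.

```python
def combined_efficiency(f1: float, input_tokens: int, output_tokens: int) -> float:
    """Efficiency = F1 / (Input Tokens + Output Tokens)."""
    return f1 / (input_tokens + output_tokens)


def relative_gain(before: float, after: float) -> float:
    """Fractional improvement from `before` to `after`."""
    return after / before - 1


if __name__ == "__main__":
    # GPT-5.1, baseline vs. LLMLingua + TALE, as reported above.
    print(f"token efficiency gain: {relative_gain(4.79e-07, 8.80e-07):.0%}")  # 84%
    print(f"cost efficiency gain:  {relative_gain(0.1363, 0.2935):.0%}")      # 115%
```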

By leveraging these techniques in conjunction, we address the compounding costs of multi-stage pipelines. Because judgment tasks often burn significantly more tokens than ideation—for instance, jumping from ~6,000 tokens for ideation to over 17,000 tokens for judgment—optimizing both the input context and the reasoning output is the only way to achieve sustainable scale.

The back-of-the-napkin math: what’s your runway?

If you are a startup running at scale, the numbers are staggering:

For a software company (coding agent): running 1,000 developers on a coding agent with unoptimized reasoning loops might cost $1M/year in subsidized tokens. If our 2.5× efficiency gains translate directly into spend, you reduce that “token burn” to $400K ($1M ÷ 2.5), instantly adding $600,000 back to your bottom line.

For a red teaming startup: a typical safety evaluation suite can cost $75 per run. Scaling this to 10,000 adversarial tests per quarter costs $750,000. Our optimized pipeline can bring that same eval down to $20 per run, or $200,000 per quarter.

Quarterly savings: $550,000

Yearly runway expansion: $2.2 million
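The napkin math above reduces to a few multiplications. This sketch reproduces it; like the scenarios themselves, it assumes efficiency gains translate one-for-one into lower spend at constant quality, and the function names are illustrative.

```python
def optimized_annual_spend(annual_spend: float, efficiency_gain: float) -> float:
    """Spend after an N-fold efficiency gain, assuming spend scales as 1/gain."""
    return annual_spend / efficiency_gain


def eval_savings(runs: int, cost_before: float, cost_after: float) -> float:
    """Savings from re-pricing a fixed number of eval runs."""
    return runs * (cost_before - cost_after)


if __name__ == "__main__":
    coding_after = optimized_annual_spend(1_000_000, 2.5)
    print(f"coding agent: ${coding_after:,.0f}/yr, "
          f"saves ${1_000_000 - coding_after:,.0f}")
    quarterly = eval_savings(10_000, 75, 20)
    print(f"red teaming: ${quarterly:,.0f}/quarter, ${4 * quarterly:,.0f}/year")
```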

The Centific accuracy loop

Optimization is a double-edged sword. If you compress too hard, the model can talk itself into wrong answers or lose its reasoning edge. We’ve seen this happen catastrophically with models like Kimi K2 Thinking when not handled with care.

The Centific Accuracy Loop addresses this risk. As a data-as-a-service leader, we don’t just hand you a script and cross our fingers. Our expert human raters provide a qualitative validation layer, verifying that every compressed prompt and every shorthand reasoning step preserves real-world answer quality and keeping the F1 delta within ±5%.
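An automated pre-check can sit in front of human review: discard any optimized configuration whose F1 drifts outside the ±5% band around the unoptimized baseline, then pick the most cost-efficient survivor. This sketch reads ±5% as a relative change, which is our assumption; the `Config` type and the configuration names and scores are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class Config:
    name: str
    f1: float
    cost_usd: float


def within_tolerance(baseline_f1: float, candidate_f1: float, tol: float = 0.05) -> bool:
    """True if the candidate's F1 is within +/- tol (relative) of the baseline."""
    return abs(candidate_f1 - baseline_f1) / baseline_f1 < tol


def pick_config(baseline_f1: float, candidates: Sequence[Config],
                tol: float = 0.05) -> Optional[Config]:
    """Most cost-efficient (F1 / cost) candidate that passes the accuracy gate."""
    safe = [c for c in candidates if within_tolerance(baseline_f1, c.f1, tol)]
    return max(safe, key=lambda c: c.f1 / c.cost_usd, default=None)


if __name__ == "__main__":
    options = [
        Config("lingua", f1=0.79, cost_usd=3.50),
        Config("lingua+tale", f1=0.78, cost_usd=2.40),
        Config("aggressive-tale", f1=0.55, cost_usd=1.10),  # cheap but degraded
    ]
    best = pick_config(0.80, options)
    print(best.name if best else "no safe configuration")
```

The aggressive configuration has the best raw F1-per-dollar but fails the gate, so the check selects "lingua+tale"; human raters then validate what the metric cannot.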

We do more than reduce cost; we give you a human-verified accuracy delta you can trust. As models advance, the advantage will come from efficiency, not scale alone. Is your AI strategy built for the gym economics of 2025, or the sustainable scale of 2026?
