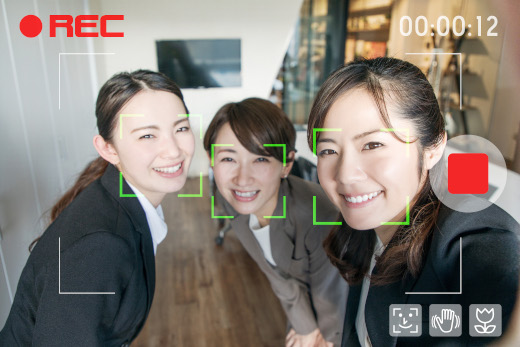

Next generation products will need to provide next level personalization. Facial and image recognition provides next level engagement to users. While AI models can recognize a face, most models need training to recognize different kinds of faces.

Voice-enabled applications are not just "nice to have" additions to your existing customer-facing sales channels. They are an entirely new way to engage and retain customers that will maintain profitable sales growth beyond the next wave of technological disruption.

Customers are more demanding than ever. Apps that are able to provide recommendations based on a users's location and interests will have the edge over their competition. Smart recommendations achieve 'stickiness' and brand loyalty.

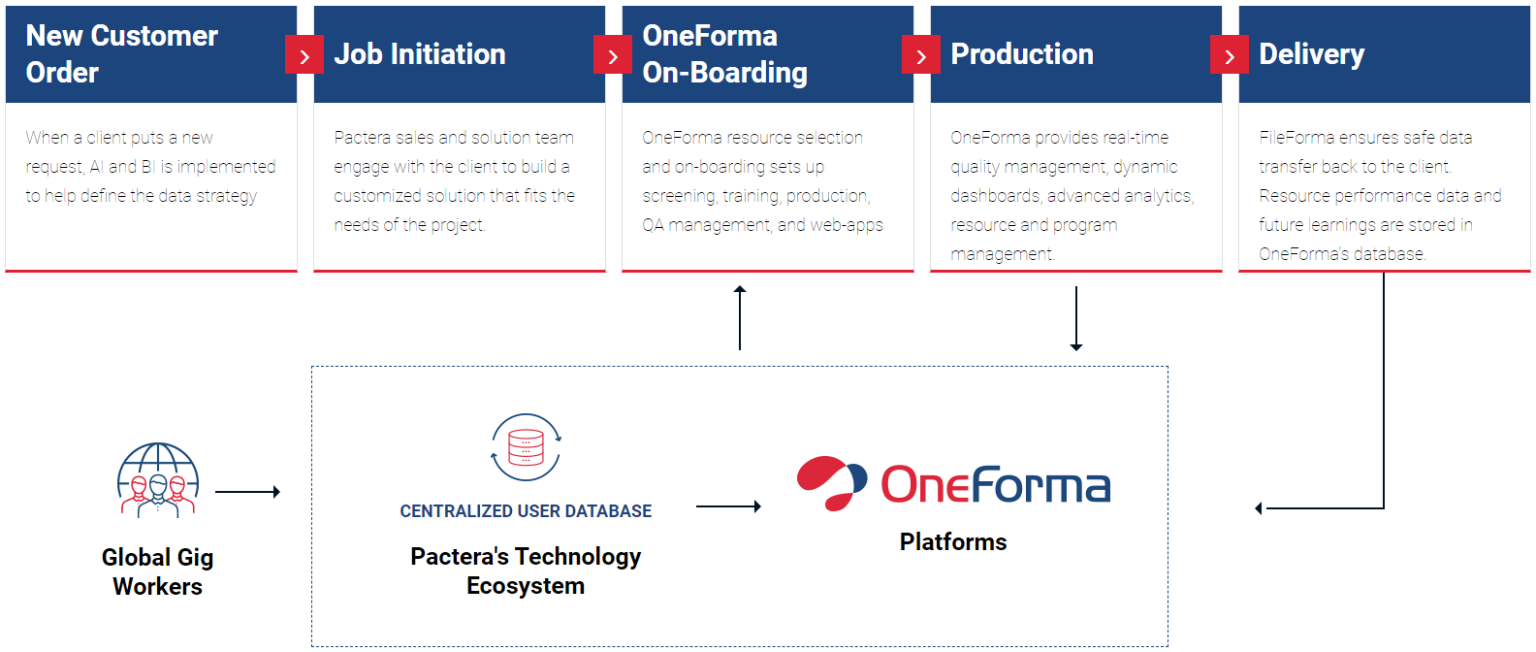

OneForma is an enterprise-grade and fully customizable platform to manage all of your ML and AI training data needs from recruiting/user management, workload distribution and quality management. Some of the major features of OneForma include:

Full scale resource recruitment for agencies, freelancers and crowd. Comprehensive profile management, self-qualification and training functionalities help to achieve and maintain service

Comprehensive knowledge base generated from global and local project forums help train resources, ease communication and facilitate knowledge transfer.

Production, workflow and allocation environments with interfaces for data processing, custom webapps, 3rd party and client toolset integrations via API provide hybrid solutions for complex AI enablement or localization programs.

Oversight and Management offers full project management functionality covering project creation, allocation, status & financial tracking and other associated management functionalities.

BI Insight & Client Layer portals provide excellent functionality for Project/Job creation (UI and API), Program & Financial Status Tracking and Project Communication.

Image labeling is instrumental for AI models that control self-driving cars. In these contexts, image recognition can mean the difference between life or death on the road.

OneForma has been used to train an Object Detection engine to detect different objects in a wide variety of images (1M+) within a large range of taxonomies. Better image recognition means more accurate road detection.

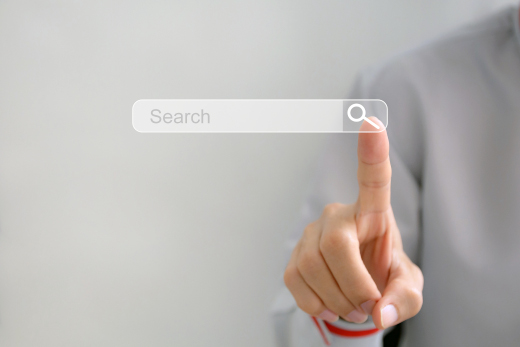

Query Categorization is a fundamental part of AI-driven search suggestions in a variety of commercial search engines, helping the user making their next search smoother, by not only providing suggested keywords, but also addressing the search into specific categories of content.

OneForma has been used for Search Engine Optimization to categorize a set of search queries (500k+) in a variety of languages into three different set of taxonomies. Optimizing such process means simpler but yet effective web searches for the final user.

Voice Recognition empowers a large part of user experiences from smart speakers to kiosks, as well as accessibility. Audio Transcription is a core part of the voice recognition training and allows these AI models to perform better and better over time. It consists of the transcription of an anonymized recording either recorded via OneForma by a native speaker or provided by the customer. The application of tags to markup specific events, such as the presence of background noise or secondary speakers.

OneForma has been used for Voice Recognition training in more than 50 languages, providing support to a wide range of products, from voice assistants to voice recognition in shows for accessibility. A large variety of accurate transcriptions means that end products can recognize a wide variety of voices, taking into account factors such as age, accent and the voice pitch.

Intent Annotation is the core training of every Natural Language Processing (NLP) model that fuels products like chatbots, smart assistants or kiosks. Utterances are analyzed and a human recognizes the “intent” of the user and the different entities that compose the utterance, such as the name of a food item, or the date/time of the appointment.

OneForma has been used for Intent Annotation in more than 10 languages to train a voice-enabled assistant with a constantly updating set of features. The OneForma and delivery teams have adapted the workflow to support weekly changes to the annotation process, constant knowledge updates and direct feedback to resources to enable a constantly evolving process in an efficient way. Intent Annotation is the core element of the AI vs. Human interaction and makes lovable AI-driven experiences possible.

One thing all the AI models have in common is data. Sometimes data can be available in the wild and might be enough to train small models during the initial phases of development. But when it is time to launch to a broad consumer base, datasets need to become 10 times larger for production. Datasets need to cover a variety of contexts, such as in voice-enabled products. A dataset must consist of recordings with different accents and ages. In vision-enabled models, there is the need of collecting a wide variety of photos with various settings, such as different cameras or light conditions.

OneForma has been used to collect photos used to train an OCR (Optical Character Recognition) model to recognize text in a variety of languages and in different objects (business cards, receipts, book covers, etc.) by using phtos taken with a smartphone, thanks to the participation of our large community of freelancers, the team collected more than 1 million photos in 3 months. Our Web interface was also designed to use OCR itself to pinpoint images that had an higher chance to not have text in the target language or for which the variety of content wasn’t enough when compared to previously uploaded photos.

Maps are now fundamental for a wide variety of operations from exploring unventured territories to delivering packages. So how do you ensure maps are always accurate and the best route is optimized to your destination? OneForma implements Map annotation projects, where our judges are asked to judge the information on the map compared to a query, and see if it’s accurate, or if that needs to be changed/improved.

OneForma has been used to create maps of malls, airports, and other large structures called “venues” by correlating the directory maps from their websites with aerial photography to create interior maps that can be overlaid exactly over the customer’s map platform. This creates even better personalization as the user can receive map directions not only to the destination building, but also inside the venue to reach it their final destination.